Part 2 of 3: Framework-by-framework details

Last updated: April 2026. Every fact in this document is sourced from published materials such as GitHub releases, official documentation, peer-reviewed papers, or reputable press. Where we could not find published information, we say “not published.” The landscape of frameworks, APIs, and MCP servers is moving faster than the publishing cycle; if we wait for a static industry standard, we’ll be documenting history rather than helping architects build the future. We welcome corrections and suggestions.

The Journey So Far

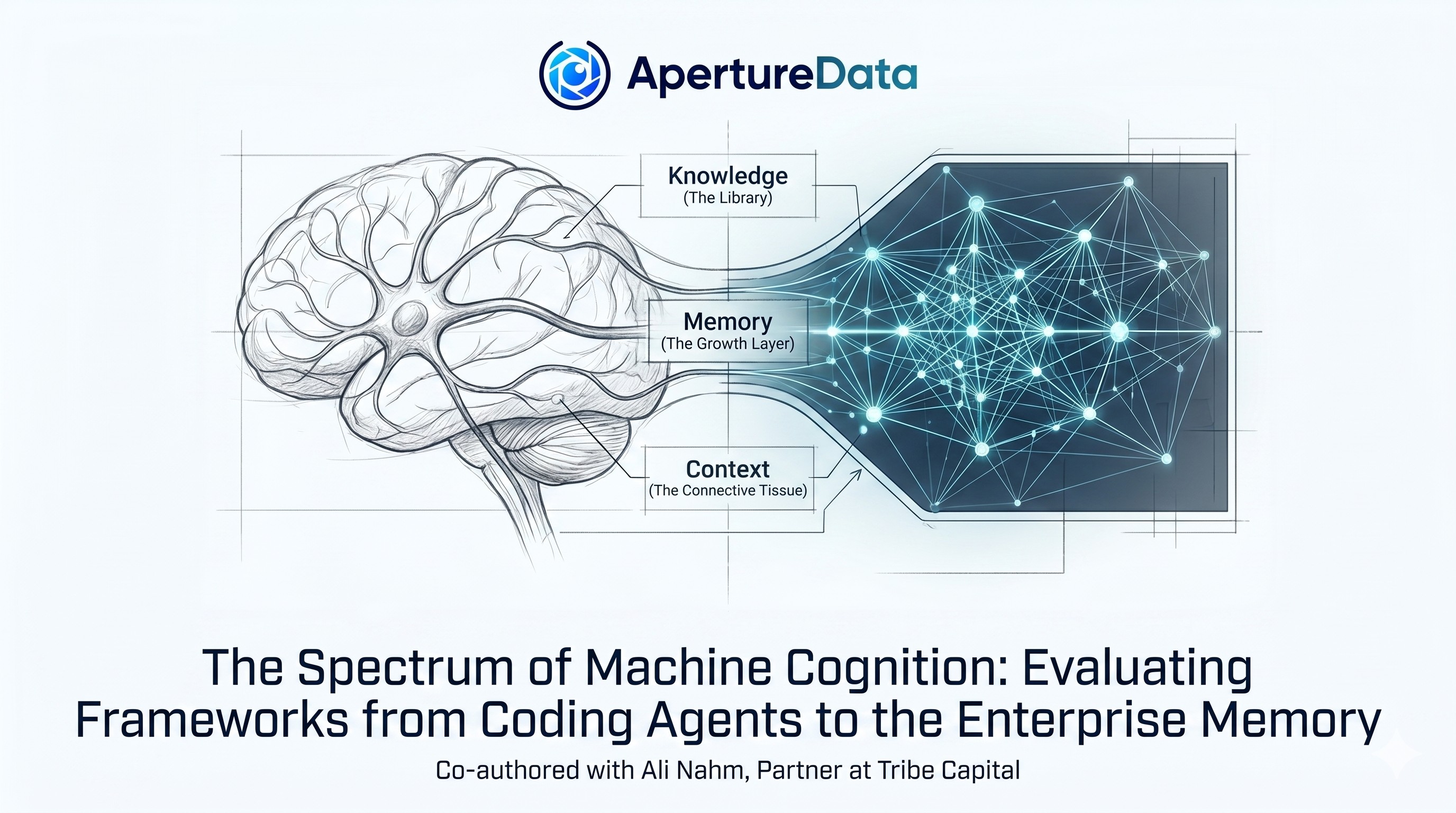

In Part 1 of this series, we explored the "Human Blueprint" for AI memory in the context of organizations, the idea that for agents to truly reason, they must mimic the way the human brain balances knowledge, learning, and experience. In Part 2a, we introduced the KMC (Knowledge-Memory-Context) Blueprint to help define the three distinct layers required for an AI to “find”, “learn”, and "understand" so it could “think” like a colleague.

Now, it is time to move from theory to the technical landscape.

Recap: The Methodology of Machine Cognition

This post provides a data-heavy deep dive into 20+ of the most influential frameworks in the 2026 market. By evaluating these tools against the KMC standard, we can see exactly how the industry is maturing from simple "data storage" to the sophisticated "Machine Cognition" that organizations can deploy their AI on top of.

Note on Research: Special thanks to Ali Nahm (from Tribe Capital) for her guidance in broadening our evaluation criteria and providing the critical feedback that helped refine our research methodology for this study.

How We Group the Frameworks

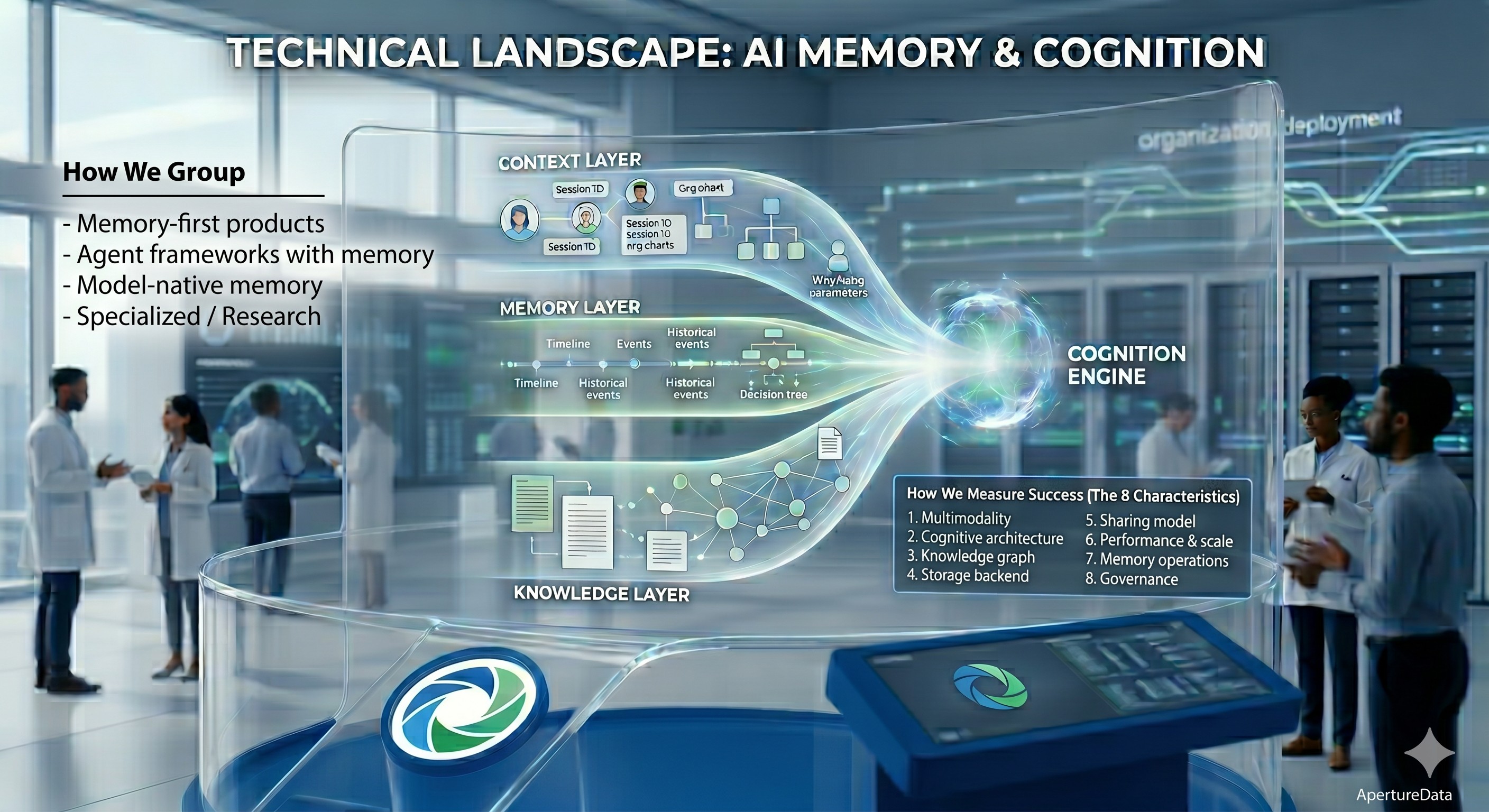

This framework-by-framework analysis spans four categories:

- Memory-first products: memory is the core value proposition. This category also includes a memory-native agent variant. Included frameworks: Aperture Nexus, Cognee, Hindsight, Letta, Mem0, MemMachine, Memories.ai, Prem Cortex, Rippletide, Sentra.app, Supermemory, Zep (Graphiti)

- Agent frameworks with memory: memory is one feature among many supported for multi-agent execution. Included frameworks: Agno Memory & Dash, CrewAI, LangGraph, Lyzr Cognis, Mastra

- Model-native memory: tied to a specific model provider; not easily portable. Included frameworks: Claude memory, OpenAI memory

- Specialized / research: not production-ready; included for architectural influence. Included frameworks: MemPalace, MemoryOS

How We Measure Success (The 8 Characteristics)

Each framework is evaluated against the same eight characteristics that we described in detail in the previous blog. These metrics represent the bridge between a simple database and a cognitive system that can deliver Correctness, the right answer at the right time. The summary editorial explains why each one matters for organizational (human+) intelligence.

- Multimodality: what input types are natively stored and searchable (not just processed and discarded)

- Cognitive architecture: supported memory capabilities; whether Context (who/why/session/org) is a first-class concept

- Knowledge graph & search: ability to incorporate existing knowledge and relationships among concepts (graph structure), retrieval modes, query support

- Storage backend: method of persisting data; critical for performance at organizational scale.

- Sharing model: how memory is scoped and shared: user / session / team / org / cross-agent

- Performance & scale: published benchmarks and scale, latency, and throughput.

- Memory operations: How the system handles new information—extraction, temporal invalidation, and conflict resolution (reconciling new facts with stale data).

- Governance: security and privacy controls, plus whether historical state can be preserved, queried, and made immutable when necessary.

A Note on Benchmarks

Several AI memory vendors publicly report benchmark results, but they tend to emphasize different metrics and different benchmark families. The most common public reporting we found is around LongMemEval, LoCoMo, ConvoMem, and a few others, with some vendors also publishing latency, token efficiency, or end-to-end response-time numbers alongside accuracy. The dominant pattern is that vendors report quality benchmarks first, especially whether a system remembers facts correctly over long chats, handles temporal updates, and remains consistent across sessions. Some vendors then add systems metrics like latency or tokens per query, because those matter for production use and can change the practical ranking. For organizational intelligence, scale, response time, and throughput matter almost as much as response quality, because production deployments depend on them. We report the details we were able to find and hope the vendors will fill in any missing details over time.

Detailed Analysis

Memory-First Products

(in alphabetical order)

Aperture Nexus

aperturedata.io · GitHub · Open source, built on ApertureDB, early dev release

(Full disclosure: This study was conducted by the team at ApertureData)

Enterprise cognition layer (following the KMC human blueprint laid out earlier), built by ApertureData on top of ApertureDB, a purpose-built multimodal database with production deployments at enterprise scale. The framework is early-stage but growing rapidly; the storage infrastructure underneath is heavily tested in production.

Characteristics We Know

- Multimodality: Text, images, video, audio, documents, all first-class with embeddings, since it relies on ApertureDB and multimodal models provided by users. A text query retrieves an image natively, not a text description of it.

- Cognitive architecture: Implements infrastructure to support the KMC model (Knowledge, Memory, Context): Knowledge (existing organizational knowledge), Memory (learning layer), Context (who/why/session/org, a first-class graph entity). Models to process or reason about the data are currently configured by the users. It can enable scenarios where one agent learns a customer’s preferences from a chat session, and its update to the org-wide memory lets a marketing agent target that user with the right content.

- Knowledge graph & search: Native ApertureDB knowledge graph + vector hybrid search. Context, Session, and Principal entities are graph nodes. User, session, timestamp properties on all content enable precise targeted search / updates / pruning. Complex graph traversal to find related information. Existing knowledge base can be pre-populated.

- Storage backend: ApertureDB (ApertureData’s own purpose-built multimodal database). Not pluggable to other backends by design, the storage guarantees of unified search, multimodal-native operations are part of the product.

- Sharing model: aperture-nexus treats the principal hierarchy, timestamps, and other user-defined attributes as first-class graph entities, creating rich context for users to build upon. Structural hierarchy: Principal (user) → department → organization. Permissions are enforced at the Memory boundary: Memory is the only component that writes, so enforcement is consistent. Context carries session, org, purpose, and restriction attributes.

- Performance & scale: Storage: sub-10ms vector search, ~15ms graph queries at billion-scale (ApertureDB published benchmarks). Framework-level retrieval benchmarks: not yet published, since aperture-nexus is early-stage.

- Memory operations: Explicit API: commit() (raw storage, returns commit_id), process_and_commit() (model calls + storage), connect() (create relationships), remove() (by commit, context, session, timestamp, or search results). Automated consolidation and conflict detection: planned for v2. Future versions will introduce compression and other multimodal memory interpretations, such as object recognition followed by a commit.

- Governance: User-, team-, project-, and organization-wide sharing logic, implemented through graph support, is planned for upcoming versions. Data can be immutable if needed. Every entry carries context, and data can be retrieved in a given context. Full audit trail, RBAC, and monitoring by design.

Aperture Nexus is an enterprise-focused AI memory or cognition layer that is unique in its support for multimodality and vector-graph search without requiring multiple storage backends. It is being designed and developed with the KMC blueprint in mind. While its underlying backend, ApertureDB, is proven in production, Nexus itself is in its early stages (with a promising roadmap) and still lacks retrieval-quality benchmarks and a broader user base.
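To make the four operations listed under Memory operations more concrete, here is a minimal in-memory sketch. Only the operation names (commit, process_and_commit, connect, remove) come from the description above; the class `ToyMemory`, every parameter, and the storage layout are hypothetical and do not reflect the actual aperture-nexus signatures or ApertureDB.

```python
import itertools
from dataclasses import dataclass, field


@dataclass
class ToyMemory:
    """In-memory stand-in for illustration; NOT the aperture-nexus implementation."""
    entries: dict = field(default_factory=dict)  # commit_id -> stored record
    edges: set = field(default_factory=set)      # (src_id, relation, dst_id)
    _ids: itertools.count = field(default_factory=lambda: itertools.count(1))

    def commit(self, content, context, session):
        """Store raw content and return a commit_id (signature is assumed)."""
        commit_id = next(self._ids)
        self.entries[commit_id] = {
            "content": content, "context": context, "session": session,
        }
        return commit_id

    def process_and_commit(self, content, context, session, model):
        """Run a user-supplied model over the content, then store the result."""
        return self.commit(model(content), context, session)

    def connect(self, src_id, relation, dst_id):
        """Record a relationship between two stored entries."""
        self.edges.add((src_id, relation, dst_id))

    def remove(self, commit_id=None, session=None):
        """Remove by commit or by session, two of the selectors the text lists."""
        doomed = {
            cid for cid, rec in self.entries.items()
            if cid == commit_id
            or (session is not None and rec["session"] == session)
        }
        for cid in doomed:
            del self.entries[cid]
        # Drop relationships that point at removed entries.
        self.edges = {
            e for e in self.edges if e[0] not in doomed and e[2] not in doomed
        }
        return len(doomed)


memory = ToyMemory()
raw = memory.commit("Customer prefers email over phone", "support", "s1")
# The lambda is a placeholder for whatever model a user would configure.
cleaned = memory.process_and_commit(
    "  LONG CHAT TRANSCRIPT  ", "support", "s1",
    model=lambda text: text.strip().lower(),
)
memory.connect(cleaned, "derived_from", raw)
edges_before = len(memory.edges)
removed = memory.remove(session="s1")
```

Returning an identifier from every write is what makes the later targeted operations (connect, remove by commit) possible, which is the design point the bullet describes.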

Cognee

cognee.ai · GitHub · Open source + managed

Cognee helps you build AI memory with a Knowledge Engine that learns. You can use it to build personalized and dynamic memory for AI Agents.

Characteristics We Know

- Multimodality: 38+ data source types for ingestion. Text-first. Some other document and image types are listed but they are converted to text to be queried for memory search.

- Cognitive architecture: Graph-native. Memify pipeline builds a self-improving knowledge base. Skills system (v0.5.4, Dec 2025): agents acquire reusable capabilities. 14 retrieval modes.

- Knowledge graph & search: It combines semantic vector search with a graph store so it can answer by meaning and by relationship. It also emphasizes entity resolution and connecting concepts across sources, which is useful for enterprise knowledge and multi-hop reasoning. Ontology can be specified to make the entity and connection names relevant.

- Storage backend: Pluggable: multiple graph, relational, and vector store backends.

- Sharing model: Per-user / group / public scoping. JWT and API key auth.

- Performance & scale: Cognee’s benchmark results are strong on answer quality and reasoning, especially for multi-hop QA. On its 24-question HotPotQA subset with 45 repeated runs, it reports Human-like Correctness of 0.93, DeepEval Correctness of 0.85, F1 of 0.84, and Exact Match of 0.69. Overall enterprise performance depends on how well their chosen backend stores are deployed, tuned, and operated together.

- Memory operations: Automated consolidation via Memify. Importance-weighted ingestion (v0.5.7). Skills system for reusable agent capabilities.

- Governance: Security, privacy, logging supported in local and cloud mode. Unclear on monitoring support. Fully GDPR-compliant. Data is encrypted at rest and in transit. Made for air-gapped enterprise deployment.

Cognee aligns with the human model of conceptual abstraction, successfully using an automated "cognify" pipeline to transform raw data into a structured knowledge graph that mimics institutional reasoning. Because it extracts text from other data modalities, it can lose valuable signals captured in those data types, such as emotions. The reliance on external backends introduces more dependencies for scale and performance, even though its memory abstractions score well on human-like correctness.
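For readers less familiar with the F1 and Exact Match figures quoted above, the sketch below shows how these two metrics are commonly computed for question answering, using token overlap between a predicted and a reference answer. This is the widely used SQuAD-style convention with a simplified normalization step; whether Cognee's evaluation harness computes them in exactly this way is an assumption.

```python
from collections import Counter


def normalize(text):
    """Lowercase and split on whitespace (a simplified normalization)."""
    return text.lower().split()


def exact_match(prediction, reference):
    """1.0 if the normalized answers are identical, else 0.0."""
    return float(normalize(prediction) == normalize(reference))


def token_f1(prediction, reference):
    """Harmonic mean of token-level precision and recall."""
    pred, ref = normalize(prediction), normalize(reference)
    # Multiset intersection counts each shared token at most as often
    # as it appears in both answers.
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


em = exact_match("Paris", "paris")
partial = token_f1("the city of Paris", "Paris")
miss = token_f1("London", "Paris")
```

The gap between the two metrics is expected: a verbose but correct answer earns partial F1 credit while scoring zero on Exact Match.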

Hindsight

hindsight.vectorize.io · GitHub · Open source (MIT) + managed

Memory layer built by Vectorize. It matches our KMC blueprint quite closely with its notions of mental models, observations, world facts, and experience facts.

Characteristics We Know

- Multimodality: Text and conversational data. For other modalities, it extracts text and stores the objects but loses modality-specific details, e.g. tone in audio/video or emotions in images. Objects are stored in the file system.

- Cognitive architecture: Three operations: Retain (push memories), Recall (search), Reflect (generate new observations and insights from existing memories). Matches the human model of thinking and operating.

- Knowledge graph & search: Entity graph + vector search. There is no dedicated graph backend: graph connections are realized through common table expressions (CTEs).

- Storage backend: PostgreSQL flavors (local and cloud hosted), file system.

- Sharing model: Not published. MIT license enables self-hosted enterprise deployment. Compliance certifications: unknown.

- Performance & scale: LongMemEval: 91.4% with Gemini 3 Pro (highest published result in this landscape, as of March 2026). With a 20B open-source model, it surpasses full-context GPT-4o (60.2%). BEAM benchmark at 10M tokens: 64.1%. Storage backend performance will depend on Postgres scaling, but graph queries can be slower when realized through CTEs. Large-scale tests are needed to show the scaling factors.

- Memory operations: Retain, Recall, Reflect. Queries pull temporal, graph, semantic, and keyword results in parallel, then rerank them with a cross-encoder. Various LLMs can be plugged in for the final result.

- Governance: Comprehensive telemetry through Prometheus, Grafana in cloud platform, role-based access for teams.

Hindsight from Vectorize matches the human model we have laid out for enterprise operations, but the backend choices could create problems, particularly for graph search queries. It is unique in combining the four key methods of access for every query but loses a lot of detail due to its limited multimodal support. The integration with the existing knowledge base could pose challenges.
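To illustrate what realizing graph connections through CTEs can look like, and why each extra hop adds work, here is a small sketch of a bounded multi-hop traversal using a recursive common table expression. It uses Python's built-in sqlite3 so it runs anywhere; Hindsight itself is described as running on PostgreSQL, which supports the same `WITH RECURSIVE` construct. The `edges` table, its columns, and the sample entities are hypothetical and are not Hindsight's schema.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE edges (src TEXT, dst TEXT)")
conn.executemany(
    "INSERT INTO edges VALUES (?, ?)",
    [
        ("alice", "project_x"),
        ("project_x", "vendor_y"),
        ("vendor_y", "contract_z"),
        ("bob", "project_q"),
    ],
)


def reachable(conn, start, max_hops):
    """Return (node, depth) pairs reachable from start within max_hops."""
    return conn.execute(
        """
        WITH RECURSIVE walk(node, hops) AS (
            SELECT ?, 0
            UNION
            -- Each recursion step is one more join against the edge table.
            SELECT e.dst, w.hops + 1
            FROM walk AS w JOIN edges AS e ON e.src = w.node
            WHERE w.hops < ?
        )
        SELECT node, MIN(hops) AS depth
        FROM walk GROUP BY node ORDER BY depth, node
        """,
        (start, max_hops),
    ).fetchall()


two_hops = reachable(conn, "alice", 2)
```

Because every hop is another relational join, traversal cost grows with depth and fan-out, which is the scaling concern raised above for graph queries on a relational backend.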

Letta

letta.com · GitHub · Open source + managed cloud.

Commercial spin-out of MemGPT, an academic project from UC Berkeley’s Sky Computing Lab. Letta Code, now their focus, is a model-agnostic agent harness with persistent memory. Given that focus, Letta could now belong under Agent Frameworks, but its agents are still memory-first, so we keep it here.

Characteristics We Know

- Multimodality: Coding agents synchronize files but memories are primarily text.

- Cognitive architecture: With Letta Code, you use the same agent indefinitely - across sessions, days, or months - and have it get better over time. Your agent remembers past interactions, learns your preferences, and self-edits its memory as it works. With Claude Code or Codex, every user gets the same agent that acts identically. With Letta Code, you can deeply personalize your agents to be unique to *you*. It can selectively load skills from other projects. Its notion of context is very much tied to a user and all the work they need to do.

- Knowledge graph & search: Memory is organized as a set of files in a folder hierarchy, not a graph-based search in the traditional sense but it can stage access to information across various file levels.

- Storage backend: Letta Code agent’s memory is organized into a git-backed context repository called MemFS (short for “memory filesystem”), which consists of folders of markdown files.

- Sharing model: Session-scoped. Conversations API enables shared memory across parallel sessions. No org/department hierarchy published.

- Performance & scale: Letta Code claims strong TerminalBench performance and compares favorably with provider-specific coding harnesses, while still being model-agnostic. It doesn’t answer how the underlying storage and state layer behaves at enterprise scale.

- Memory operations: Agent-driven explicit operations on memory blocks: the agent decides what to store and when. In-context summarization and paging. All files inside the top-level directory system/ are pinned to the agent’s context window, and all memories outside of system/ are visible to the agent in the memory tree, but their full contents are omitted. When memory subagents (such as the reflection subagent, or dream phase) run, they modify the memory git repo using git worktrees, allowing parallel subagents to modify an agent’s memory at the same time.

- Governance: Memory is mutable since agents overwrite core memory blocks. You can use git for histories and monitor agent performance. It supports RBAC. Audit logging is unclear.

Letta Code and the Letta Code SDK are great for personalized agents, which are now primarily coding agents. Searching through Git in a personalized context is pretty easy and can extend to working with teams in an organization. However, this does not support Cognition broadly and is less sensitive to performance or scale at a global level.
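The pinning rule described under Memory operations (files under system/ are loaded in full, everything else appears only as a path in the memory tree) can be sketched as follows. The function `build_context`, the sample file names, and the output format are hypothetical; this is an illustration of the rule as described, not Letta's implementation of MemFS.

```python
import tempfile
from pathlib import Path


def build_context(memory_root):
    """Split markdown memories into pinned text and a paths-only tree."""
    root = Path(memory_root)
    pinned, tree = [], []
    for path in sorted(root.rglob("*.md")):
        rel = path.relative_to(root)
        if rel.parts[0] == "system":
            # Pinned: full contents go into the context window.
            pinned.append(f"## {rel.as_posix()}\n{path.read_text()}")
        else:
            # Visible but not loaded: only the path is exposed.
            tree.append(rel.as_posix())
    return "\n".join(pinned), tree


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "system").mkdir()
    (root / "projects").mkdir()
    (root / "system" / "persona.md").write_text("Prefers concise answers.")
    (root / "projects" / "billing.md").write_text("Long design notes ...")
    pinned_text, visible_paths = build_context(root)
```

The trade-off is that the context window holds only what is pinned, while the agent can still see that other memories exist and open them on demand.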

Mem0

mem0.ai · GitHub · Open source + managed SaaS

Purpose-built memory infrastructure layer for AI applications. Open source with a managed cloud offering and enterprise tier. Mem0’s "Extract+Decide" makes it more than just a filing cabinet for information.

Characteristics We Know

- Multimodality: Primarily text. Create text memories from images / docs without storing them as native multimodal objects. Audio/video not supported.

- Cognitive architecture: Long- and short-term memories are available. A simple Context model (session purpose, org, why) is in place and can govern what is shown to the user. Retrieved memory is ranked by recency and can also be ranked by relevance through the graph.

- Knowledge graph & search: Hybrid: vector + entity graph (Neo4j or Kuzu) + KV store. Graph captures entity relationships. Semantic search + filtering. No graph traversal at query time.

- Storage backend: Pluggable: 20+ vector store providers. Graph: Neo4j or Kuzu. KV store layer for fast fact lookup. Managed cloud or self-hosted options available.

- Sharing model: Multi-tenant organizations and projects with membership-based access control. It scopes memory by user, agent, run/session, and app, which helps keep one team’s or one agent’s context separate from another’s. This gets harder, though, if each team chooses different storage backends.

- Performance & scale: LOCOMO benchmark: Mem0g (graph) 68.4% at 2.59s p95; Mem0 (vector-only) 66.9% at 1.44s p95 (ECAI 2025, arXiv:2504.19413). 186M+ API calls/month (vendor). 91% faster than full-context; 90% fewer tokens (vendor). Overall system performance and scale will depend on chosen storage backend and workload intensity.

- Memory operations: Extract+decide: LLM classifies each incoming fact as ADD (new), UPDATE (modify existing), or SKIP (duplicate). Prevents unbounded accumulation of conflicting records. Tagged / timestamped memories.

- Governance: SOC 2 and HIPAA readiness, encryption in transit and at rest, audit logging for memory operations, memory isolation, TTL/expiry policies, and forensic snapshots. However, more backends usually means more places for policy drift, audit gaps, and access-control mistakes.

Mem0 looks strong as a memory abstraction, but multi-backend flexibility is not automatically a plus for enterprises. It supports a lot of our KMC requirements minus multimodality for true human-like memory.
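The Extract+Decide step described above can be pictured with a small sketch. In Mem0 the classification is made by an LLM; here a plain equality check stands in for that model call so the example stays runnable, and the key/value representation of a fact is a hypothetical simplification. Only the three outcomes (ADD, UPDATE, SKIP) come from the description above.

```python
def decide(memory, key, value):
    """Classify an incoming fact; a rule-based stand-in for the LLM call."""
    if key not in memory:
        return "ADD"      # nothing stored about this key yet
    if memory[key] == value:
        return "SKIP"     # duplicate of what is already stored
    return "UPDATE"       # conflicts with the stored value


def apply_fact(memory, key, value):
    """Apply the decision so the store never holds two values per key."""
    action = decide(memory, key, value)
    if action != "SKIP":
        memory[key] = value
    return action


memory = {}
incoming = [("diet", "vegetarian"), ("diet", "vegetarian"), ("diet", "vegan")]
actions = [apply_fact(memory, key, value) for key, value in incoming]
```

Deciding before writing is what keeps conflicting records from accumulating: the third fact replaces the first rather than sitting next to it.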

MemMachine

memverge.ai · memmachine.ai · Open source core + enterprise commercial

MemMachine is an AI memory layer built by MemVerge. It persists across multiple sessions, agents, and large language models, building a sophisticated, evolving user profile. It transforms AI chatbots into personalized, context-aware AI assistants designed to understand and respond with better precision and depth.

Characteristics We Know

- Multimodality: Text-focused. Other modalities are not a focus.

- Cognitive architecture: Three memory types: episodic (conversation history), personal (user-specific facts), procedural (how-to knowledge). One of few frameworks to explicitly claim procedural memory. Persistent across sessions, models, agents, and environments. It supports rich user context around preferences, goals, sentiments, products used.

- Knowledge graph & search: MemMachine uses graph store capabilities to represent connected episodic memories, which makes relationship traversal and context expansion a core part of the design. It supports derived relationships, multi-hop contextual recall.

- Storage backend: It is graph-first for episodic memory and SQL-first for profile memory. Allows plugging in of certain databases like Neo4j, NebulaGraph, Postgres.

- Sharing model: It supports users, agents, assistants, and an MCP server, which suggests shared memory access across applications and workflows. The open question is how strong the tenancy and permission model is for large organizations, because that is not as visible in the public description.

- Performance & scale: MemMachine’s performance is strong on retrieval quality and competitive on speed, especially for long-term conversational memory. It reports top-tier LoCoMo scores and claims roughly 80% lower token usage and up to 75% faster add/search operations versus comparison systems like Mem0. The backend setup will determine the overall performance.

- Memory operations: Supports smart query routing for agents and classic operations: ingest, store, retrieve, and maintain/deduplicate different memory types over time. The public materials do not discuss compacting or removing memory.

- Governance: They offer metrics and telemetry, logging support. A version with enterprise support will be available later.

MemMachine offers a good memory alternative; however, its performance and scalability depend on the configuration of the backend database. To support more human-like memory in a general context, it is missing multimodality, and its focus is more on powering a comprehensive coding agent.

Memories.ai

memories.ai · AI visual memory · SaaS + VPC

Memories.ai is a visual memory layer for enterprises that turns massive video archives into searchable, long-term visual “memory atoms” with AI retrieval and incident-detection tools.

Characteristics We Know

- Multimodality: memories.ai is video-first, ingesting video, audio, and metadata rather than generic text, images, and PDFs; it is optimized for visual-scene-based understanding, not broad-content RAG.

- Cognitive architecture: Its core is the Large Visual Memory Model (LVMM) plus video-to-index compression, so AI can “see once, remember forever” across huge archives instead of just short-term frame sequences. They construct context when persisting information to indicate where video came from and timing so you can retrieve relevant information in your searches but the context is not around who did what for what reason in a traditional enterprise sense of usage.

- Knowledge graph & search: It turns video into structured “memory-atom” embeddings that support semantic-style search (e.g., “slip-and-fall on aisle 3”) directly from hours-long feeds, closer to a visual-fact-graph than a simple frame-search.

- Sharing model: It targets security, retail, and robotics teams, supporting multi-location, multi-user access to video histories, but public docs focus on functions rather than explicit tenancy or permission-schema detail.

- Performance & scale: MARC reports 95% visual-token reduction, 72% lower GPU memory, 23.9% faster generation latency, and 4.71x–5.91x tokens/sec gains. X-LeBench is about long-horizon egocentric video understanding and shows that temporal-localization is still hard, but retrieval-based approaches improve recall and summarization versus naive long-context handling. One additional note we found was that the system outperforms competitors by up to 20 points on benchmarks like MVBench and NextQA, with ultra-low hallucinations thanks to its memory-centric design.

- Memory operations: “Memory” here means visual‑memory operations: ingest, compress, index, and retrieve event‑based video knowledge rather than text‑message‑level add/update/delete; tools like Clip Search and Video Chat rely on this layer without exposing raw memory primitives.

- Governance: Videos are ingested and compressed on-device or in the cloud, reducing data to essential insights without storing raw footage indefinitely, which addresses privacy concerns. Compliance: GDPR and SOC 2. It is enterprise-oriented around security and operations, with incident-detection and evidence-ready workflows, but it is more incident-audit-focused than a generic, policy-and-retention-driven cognition-governance platform. No facial recognition.

memories.ai is a visual-fact-based knowledge engine: it stores what the AI “saw,” when, and where, and then lets you ask questions about events rather than raw footage. The memory is continuous, scene-aware, and temporal, shaping context around physical-world incidents (slip-and-fall, people-tracking, behavior) rather than document-retrieval, while multimodality is real but tightly bound to video/audio metadata, not general-content ingestion. How the storage backend behaves, and hence how it performs at scale, is unclear for enterprise workloads.

Prem Cortex

premai.io · GitHub · Open source

A memory system from Prem Labs for AI agents that stores, retrieves, and evolves information over time. Inspired by human cognitive architecture with dual-tier memory (STM/LTM) and intelligent evolution capabilities.

Characteristics We Know

- Multimodality: Text only. Image, audio, video: listed for future improvement.

- Cognitive architecture: Cortex is built around human-like memory: short- and long-term memory that auto-organizes into “smart collections” and evolves over time. It emphasizes temporal awareness and self-organizing groupings, so agents can retrieve context by time, topic, and relationship instead of a flat memory dump, especially when the memory collection grows more complex. Context, in the sense of which user, which environment, and why a decision was made, is missing. The existing knowledge base is also not captured.

- Knowledge graph & search: Knowledge graph with typed relationship edges and confidence scores though no graph database is used in the backend. Temporal hybrid retrieval (semantic + recency weighting) is accomplished through filtering in ChromaDB.

- Storage backend: ChromaDB server needs to be running in the backend when using this memory.

- Sharing model: Smart collections support shared domains (e.g., work.finance), but clear tenancy/permissions are not visible in public docs.

- Performance & scale: Strong on LoCoMo: near-top scores at ~60% fewer tokens than full-context, with 2–8 s latency for rich queries; good for deliberative agents, not real-time UI.

- Memory operations: Rich ingest, categorization (~500 ms), evolution (~1–2 s), and collection-aware query-rewriting; powerful but ops-heavy. You can attach weights to recency vs semantics when retrieving.

- Governance: Little explicit mention of audit, retention, or permissions; best treated as a flexible but self-governed memory layer.

Cortex is a smart, graph-aware, token-efficient memory layer that trades latency and backend cost for richer, organized, long-term memory; strong for pilots and deliberative agents, but not yet a fully-managed, enterprise-governed platform. It also misses out on being a more human-like memory due to lack of multimodal support.
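The idea of attaching weights to recency versus semantics at retrieval time can be sketched as a blended score. The linear blend, the exponential half-life decay, and every name and default below are assumptions chosen for illustration; the text above does not state the formula Cortex actually uses.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def hybrid_score(query_vec, memory_vec, age_days,
                 semantic_weight=0.7, half_life_days=30.0):
    """Blend semantic similarity with a recency term that halves every half-life."""
    recency = 0.5 ** (age_days / half_life_days)
    return (semantic_weight * cosine(query_vec, memory_vec)
            + (1 - semantic_weight) * recency)


def rank(query_vec, memories, semantic_weight):
    """Sort (label, vector, age_days) tuples by descending hybrid score."""
    return sorted(
        memories,
        key=lambda m: hybrid_score(query_vec, m[1], m[2], semantic_weight),
        reverse=True,
    )


query = [1.0, 0.0]
memories = [
    ("old but on-topic", [1.0, 0.0], 365),
    ("fresh but off-topic", [0.0, 1.0], 0),
]
semantic_first = rank(query, memories, semantic_weight=0.7)[0][0]
recency_first = rank(query, memories, semantic_weight=0.2)[0][0]
```

Shifting the weight flips which memory ranks first, which is the knob the Memory operations bullet refers to.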

Rippletide

rippletide.com · Enterprise SaaS · Reasoning

Enterprise agent decision infrastructure built around a Hypergraph Decision Database — not a vector store but a structured reasoning layer that binds facts, rules, policies, and causal relationships. Every agent decision is validated deterministically and produces a full audit trace for enterprise trust.

Characteristics We Know

- Multimodality: Text and structured data. Rippletide is not a general multimodal memory product; it focuses on structured decision-making context (facts, policies, workflows) rather than handling images, audio, or broad content ingestion.

- Cognitive architecture: Rippletide’s architecture is a decision-context graph and policy enforcement layer that sits between agents and production systems, not an agent-cognition layer.

- Knowledge graph & search: It uses a hypergraph model to represent facts, rules, policies, and causal traces, then reasons deterministically over those before allowing an action to execute.

- Storage backend: Hypergraph Decision Database (proprietary). Integrations: AWS Bedrock, LangChain, CrewAI. MCP-compatible.

- Sharing model: Enterprise governance layer. Specific user/team/org scoping: unknown.

- Performance & scale: Claims: <1% hallucination rate, 100% guardrail compliance in production (vendor; independent verification not found). Rippletide's hypergraph engine is optimized for low-latency decision evaluation, processing complex multi-constraint queries in milliseconds (about 50 ms). It scales horizontally to support thousands of concurrent agent sessions without degradation.

- Memory operations: The core is a decision context graph (hypergraph) that tracks applicable, temporal, and scoped facts, not just free-text retrieval. It cares about “applicability over retrieval” and temporal validity, so it is more policy-aware and deterministic than classic vector-based memory or RAG systems.

- Governance: Policy violations and hallucinations are intercepted before execution. Every decision is logged with a complete causal trace — which data, which rule, which outcome, and why — making it regulator-ready and board-ready. SOC 2 Type II certified, GDPR and CCPA ready, with EU-resident servers, end-to-end PII encryption, and row-level access controls.

Rather than being a cognitive engine layer, Rippletide fits better as the layer between retrieving the input that agents reason over and returning answers to the user, where validations and fixes can be put in place.
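The "applicability over retrieval" idea, where only facts that are in scope and temporally valid are considered before an action is allowed, can be sketched with a deterministic check that also returns a trace. This is a flat list filter, not a hypergraph, and the `Policy` fields, the refund example, and the fail-closed behavior are all hypothetical choices made for illustration rather than Rippletide's design.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Policy:
    name: str
    scope: str         # e.g. the department the rule applies to
    valid_from: date
    valid_to: date
    max_refund: float  # the single constraint this toy rule enforces


def evaluate(policies, scope, on, refund_amount):
    """Return (allowed, trace) using only policies applicable here and now."""
    applicable = [
        p for p in policies
        if p.scope == scope and p.valid_from <= on <= p.valid_to
    ]
    violated = [p.name for p in applicable if refund_amount > p.max_refund]
    trace = {"applicable": [p.name for p in applicable], "violated": violated}
    # Fail closed (an assumption): no applicable policy means no approval.
    allowed = bool(applicable) and not violated
    return allowed, trace


policies = [
    Policy("refund-2025", "support", date(2025, 1, 1), date(2025, 12, 31), 100.0),
    Policy("refund-2026", "support", date(2026, 1, 1), date(2026, 12, 31), 250.0),
]
allowed, trace = evaluate(policies, "support", date(2026, 4, 1), 200.0)
blocked, blocked_trace = evaluate(policies, "support", date(2025, 6, 1), 200.0)
```

The same request is approved or blocked depending on which rule was valid on the given date, and the trace records which rule decided the outcome.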

Sentra

sentra.app · Enterprise SaaS

Organizational memory layer focused on capturing decisions, commitments, and context from meetings and workplace communications. Targets team-level institutional memory rather than agent-to-agent or individual memory.

Characteristics We Know

- Multimodality: Sentra focuses on meeting audio, chat, and structured work‑tool data rather than a broad “media library” of images, video, or sensor streams. Its multimodality is mainly about connecting spoken decisions with written and code‑based artifacts, not about general‑purpose multimedia memory.

- Cognitive architecture: The core is an organizational memory system that builds a “collective mind” from meetings, Slack, email, GitHub, and calendars, then uses LLMs and Reflexion‑style reasoning to maintain a consistent state over time. It emphasizes continuity of thought and generative memory over simple retrieval‑only logic.

- Knowledge graph & search: Sentra constructs a governed knowledge graph that links decisions, commitments, and trade‑offs, with embeddings that track temporal and permission metadata. Search is not just “find docs” but “find the why and the who behind decisions”.

- Storage backend: Sentra.app likely has a fairly traditional backend, and filesystem/object-storage patterns are plausible, but the docs do not prove a filesystem-only design. The site talks about capturing, organizing, and syncing company memory across tools, which usually implies a mix of database metadata plus file/object storage rather than just raw files.

- Sharing model: Sentra is explicitly built for cross-team continuity: it unifies timelines across teams, enforces permission-aware retrieval, and surfaces decision-drift to leaders and new hires. It supports multi-team and multi-level orgs. You can also specify what it should not index and resurface.

- Performance & scale: Relies on research that replacing frontier models with a 50x smaller model drops F1 by only 0.07, while retrieval architecture optimizations contribute +0.112 F1. Operational Reinforcement introduces Monitor MDPs for structured failure feedback, exact credit assignment by design, 300-900x memory advantage over reward machines. Avoidance Learning shows that substantive LLM behavior emerges from pure negative feedback, with 80% fewer evasive responses, and counter-intuitively, adding positive rewards degrades performance. Overall performance data and scale are unknown.

- Memory operations: Memory is constructed continuously: decisions from meetings, chats, and tickets are captured, indexed, and linked into a timeline, while exceptions and precedents are turned into searchable institutional knowledge. It also supports automated reminders, status‑report generation, and proactive misalignment‑detection, so memory operations are tightly coupled with operational workflows.

- Governance: The documents indicate governed knowledge graphs, role‑based retrieval filters, and “documented decision‑making” as core commitments. SOC2 and ISO 27001 compliant with a VPC option.

Sentra.app aligns more closely with the KMC blueprint in an enterprise context. It explicitly aims to turn spoken and scattered interactions into a structured, searchable, continuous memory, which is much closer to a real cognition engine. What it is not yet is a fully multimodal, enterprise‑wide nervous system that absorbs images, rich product telemetry, sensors, and broad customer‑driven learning. The performance and scale guarantees of this potentially file-backed system are also unclear.

Supermemory

supermemory.ai · SaaS and VPC

Supermemory is a custom vector-graph memory engine with rich data-ingestion, strong benchmarks, and flexible deployment.

Characteristics We Know

- Multimodality: Supermemory ingests PDF, DOCX, TXT, MD, CSV, XLSX, HTML, images, audio, video, and URLs, then extracts and embeds meaningful content from each type. That makes it multimodal in terms of ingestion, though reasoning is still text-based rather than image-generation-centric.

- Cognitive architecture: It is built as a human-like memory layer with smart-forgetting, decay, prioritization, and relational memory-versioning (updates, extends, derives), so facts can evolve over time instead of being overwritten. You can query based on a user context and carry that information across sessions. While the knowledge base can be ingested for any user, it seems to be geared towards internal knowledge vs. something related to your product or organization.

- Knowledge graph and search: The core is a custom vector‑graph engine that combines similarity search with ontology‑aware, temporal‑aware edges to answer “who knew what, when, and how it changed” rather than just “find similar chunks”. Search is hybrid: vectors, keyword‑style matching, and graph traversal are fused, with reranking tailored to memory‑style queries.

- Storage backend: Supermemory exposes a self-hosted, managed API layer, but the underlying DB choices are not public; it is a proprietary vector-graph stack abstracted behind the service. The docs confirm it handles 100B+ tokens per month with <300 ms per query, which implies a heavily tuned backend, but enterprises cannot swap or introspect the DBs directly.

- Sharing model: The memory engine is user‑ and agent‑centric, supporting multi‑user and multi‑session applications, but it is not marketed as a full‑org‑wide “collective mind” layer. Teams and agents can share memory contexts, but organizational‑scale governance and workspace structures are less prominent in the public materials.

- Performance and scale: On LongMemEval-s, Supermemory reports ~81–85% overall and up to ~99% on newer runs, with strong marks in temporal-reasoning and multi-session evaluation. It also claims <300 ms latency though the scale of data is not mentioned. The vector-graph engine explicitly supports temporal reasoning and multi-session recall, which is why it scores so well on LongMemEval-style benchmarks.

- Memory operations: Supermemory does not just store content; it transforms it into optimized, searchable knowledge. Every upload goes through an intelligent pipeline that extracts, chunks, and indexes content in the ideal way for its type. It combines hybrid memory and RAG. Container tags and metadata are used to organize and retrieve memories.

- Governance: Governance is implicit: the human‑like decay, smart‑forgetting, and versioning help keep memory consistent and efficient, but the docs do not yet detail granular, compliance‑style policies like retention, masking, or EU‑residency. SOC2, HIPAA, GDPR compliant.
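To make the relational memory-versioning idea (updates, extends, derives) more concrete, here is a minimal sketch of an append-only store in which a new fact links to an older one instead of overwriting it. The class names, fields, and the rule that only an "updates" relation retires its parent are our assumptions for illustration; Supermemory's internal data model is not public.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MemoryVersion:
    id: str
    text: str
    relation: Optional[str] = None   # "updates", "extends", or "derives"
    parent_id: Optional[str] = None  # the memory this one relates to


class VersionedMemory:
    """Hypothetical append-only store: facts evolve through links, not overwrites."""

    def __init__(self) -> None:
        self._items: dict[str, MemoryVersion] = {}
        self._superseded: set[str] = set()

    def add(self, item: MemoryVersion) -> None:
        self._items[item.id] = item
        # Assumption: only "updates" retires the parent fact;
        # "extends" and "derives" leave the parent current.
        if item.relation == "updates" and item.parent_id is not None:
            self._superseded.add(item.parent_id)

    def current(self) -> list[str]:
        """Facts that have not been superseded, in insertion order."""
        return [m.text for m in self._items.values() if m.id not in self._superseded]

    def history(self, item_id: str) -> list[str]:
        """Walk parent links back to the original fact, newest first."""
        chain = []
        cursor = self._items.get(item_id)
        while cursor is not None:
            chain.append(cursor.text)
            cursor = self._items.get(cursor.parent_id) if cursor.parent_id else None
        return chain


store = VersionedMemory()
store.add(MemoryVersion("m1", "Alex works at Acme"))
store.add(MemoryVersion("m2", "Alex works at Globex", "updates", "m1"))
store.add(MemoryVersion("m3", "Alex leads the data team", "extends", "m2"))
```

Because nothing is deleted, `store.history("m3")` can still reconstruct how the fact changed, which is the property the versioning description emphasizes.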

Supermemory seems to be a high-performance, enterprise-grade memory engine optimized for employees building and deploying AI agents, with strong support for long-term, relational, and temporal reasoning over internal knowledge. It remains a somewhat opaque system with a black-box storage backend: it potentially excels at scale, but how large its knowledge base can get is not specified. Ingestion is multimodal, but search relies on the structured information extracted from each source. It’s unclear how you can merge customer-side knowledge with internal business knowledge to build truly contextual, knowledge-based applications.

Zep / Graphiti

getzep.com · Managed SaaS, underlying graph engine – Graphiti – open source

Zep solves the agent context problem by assembling comprehensive, relationship-aware context from multiple data sources like chat history, business data, documents, and app events, enabling AI agents to perform accurately in production. Zep is powered by Graphiti, an open-source temporal knowledge graph framework that enables relationship-aware context retrieval. Zep is the fully managed platform, whereas with Graphiti alone you have to build the actual cognition engine yourself on top of the graph framework.

Characteristics We Know

- Multimodality: Text and structured business data. No discussion around image/complex documents/audio/video.

- Cognitive architecture: Temporal knowledge graph. LLM generated context added to chunks that are stored in memory. Zep excels at building knowledge graphs from streaming data that evolves over time. You can add any unstructured text, JSON, or message data to build your knowledge graph. Zep is optimized for evolving data, not static RAG. You can add user and session data, timestamps, metadata for episodes to introduce context beyond content of the documents.

- Knowledge graph & search: Graphiti-powered temporal KG. Hybrid search: semantic + BM25 + graph traversal. Result reranking via RRF, MMR, episode-mentions, node distance. Property-level and exclusion filters. User graph is separate from session or object knowledge graph but connected so you can see all activities from a user.

- Storage backend: Backed by Graphiti which in turn can use Neo4j, FalkorDB, Kuzu, AWS Neptune for the property graph and semantic search support.

- Sharing model: RBAC permissions at account or project scope let you grant the right level of access to each teammate while keeping sensitive account actions limited to trusted users. RBAC grants permissions through roles, and every member can hold multiple assignments across the account and individual projects.

- Performance & scale: Zep claims sub-200ms retrieval at scale and promotes production-ready performance guarantees in the managed platform. Graphiti is the more custom path: performance depends much more on your own deployment, data design, and indexing choices.

- Memory operations: Both Zep and Graphiti support incremental updates and historical tracking, so they are strong on add/update behavior and point-in-time reasoning. The temporal model is a real advantage for memory correction and change tracking.

- Governance: Zep provides comprehensive security controls and compliance capabilities designed for enterprises handling sensitive data. Audit and API logging. SOC2 / HIPAA compliant.
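The point-in-time reasoning described above can be sketched with facts that carry validity timestamps: a new fact closes the old one instead of deleting it. This is a simplified illustration, not Graphiti's implementation; the `Fact` fields, the `TemporalGraph` class, and the assumption that each subject/predicate pair holds one value at a time are ours.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class Fact:
    subject: str
    predicate: str
    obj: str
    valid_at: datetime
    invalid_at: Optional[datetime] = None  # None means the fact is still true


class TemporalGraph:
    """Hypothetical store of time-bounded facts (edges)."""

    def __init__(self) -> None:
        self.facts: list[Fact] = []

    def add(self, subject: str, predicate: str, obj: str, at: datetime) -> None:
        # Close any open fact for the same subject and predicate
        # rather than deleting it, so history stays queryable.
        for fact in self.facts:
            same_key = (fact.subject, fact.predicate) == (subject, predicate)
            if same_key and fact.invalid_at is None:
                fact.invalid_at = at
        self.facts.append(Fact(subject, predicate, obj, at))

    def as_of(self, subject: str, predicate: str, when: datetime) -> Optional[str]:
        """Return the value that was true at the given moment, if any."""
        for fact in self.facts:
            same_key = (fact.subject, fact.predicate) == (subject, predicate)
            still_valid = fact.invalid_at is None or when < fact.invalid_at
            if same_key and fact.valid_at <= when and still_valid:
                return fact.obj
        return None


graph = TemporalGraph()
graph.add("acme", "plan", "starter", datetime(2025, 1, 1))
graph.add("acme", "plan", "enterprise", datetime(2025, 6, 1))
```

Asking `graph.as_of("acme", "plan", datetime(2025, 3, 1))` answers from the state of the world in March, which is what makes memory correction and change tracking possible without losing the earlier record.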

Zep is an enterprise-ready cognition framework; the missing piece is multimodality, if we are aiming for human-like AI colleagues. It also focuses less on the existing knowledge base (in our KMC framework) and more on memory and context, and it is not limited to coding agents.

Agent Frameworks with Memory

(in alphabetical order)

Memory is one component of a broader orchestration system — not the core value proposition. Most enterprise teams first encounter memory through these frameworks.

Agno + Agno Dash

agno.com · GitHub: agno · GitHub: Dash · Open source (Apache 2.0)

Agno Memory is Agno’s built-in persistent memory layer that automatically captures, stores, and retrieves agent-user interactions, preferences, and facts in your chosen database (SQLite, Postgres, MongoDB, etc.), while Dash is a self-learning data agent that uses that memory to answer SQL-backed questions grounded in six layers of context and gets better over time.

Characteristics We Know

- Multimodality: Neither Agno Memory nor Dash emphasizes multimodal ingestion; both are focused on text‑based, SQL‑backed data and chat history. There’s no indication that Dash ingests or stores images, audio, or other rich media; it’s a text‑ and SQL‑first agent sitting atop text‑based memories.

- Cognitive architecture: Agno Memory is a lightweight, persistence‑layer‑style memory engine that stores agent–user context and preferences in your choice of DB (SQLite, Postgres, MongoDB). Agno defines user profile, entity memory, and session context. Dash is a self‑learning data agent built on top of that memory, using stored patterns and corrections to refine its SQL‑backed Q&A.

- Knowledge graph & search: There is no explicit knowledge‑graph layer; memory is stored as relational or document‑style records, not as a queryable semantic graph. Dash uses a six‑layer context stack (schema, docs, examples, memories, etc.) but does not expose graph‑style or ontology‑aware retrieval.

- Storage backend: Everything an agent persists lives in a db object: sessions, memory, knowledge, traces, schedules, approvals. The interface is identical across backends. Pick from JSON files (local or cloud), embedded (SQLite), relational (Postgres, MySQL), document (MongoDB), key-value (Redis, DynamoDB, Firestore), or distributed (SingleStore). Dash seems to primarily rely on Postgres.

- Sharing model: Memory is scoped to agent‑instances and teams, but there is no explicit organization‑wide memory graph or governance layer (roles, retention policies, compliance‑style controls). It’s more about developer‑managed memory per agent than a centrally governed, cross‑platform memory bus.

- Performance & scale: No published memory benchmarks. Agno framework updated April 14, 2026; Dash updated April 8, 2026 (both actively maintained).

- Memory operations: Memory operations are simple and explicit: agents can create, update, delete, and recall memories, and when update_memory_on_run=True Agno auto‑populates user preferences. Dash leverages these operations to remember mistakes, corrections, and successful patterns, so it improves its SQL‑generation and reasoning over time.

- Governance: JWT-based RBAC, per-endpoint authorization by JWT scopes. Dash: every query scoped to user_id. No published org/department hierarchy. AgentOS offers more in this space like guardrails, human-in-the-loop validations, observability, but unclear on sharing policies across projects and organizations.
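The "identical interface across backends" idea from the storage bullet, combined with scoping every read to a user_id, can be sketched as follows. This is not Agno's API: `MemoryDb`, `DictDb`, `SqliteDb`, and `remember_preference` are hypothetical names, and the table layout is an assumption made for the example.

```python
import json
import sqlite3
from typing import Protocol


class MemoryDb(Protocol):
    """Hypothetical interface that every backend implements identically."""

    def upsert(self, user_id: str, key: str, value: dict) -> None: ...
    def read(self, user_id: str) -> dict: ...


class DictDb:
    """In-process backend, useful for tests."""

    def __init__(self) -> None:
        self._rows: dict[tuple[str, str], dict] = {}

    def upsert(self, user_id: str, key: str, value: dict) -> None:
        self._rows[(user_id, key)] = value

    def read(self, user_id: str) -> dict:
        return {k: v for (u, k), v in self._rows.items() if u == user_id}


class SqliteDb:
    """Embedded backend with the same two methods."""

    def __init__(self, path: str = ":memory:") -> None:
        self._conn = sqlite3.connect(path)
        self._conn.execute(
            "CREATE TABLE IF NOT EXISTS memories "
            "(user_id TEXT, key TEXT, value TEXT, PRIMARY KEY (user_id, key))"
        )

    def upsert(self, user_id: str, key: str, value: dict) -> None:
        self._conn.execute(
            "INSERT OR REPLACE INTO memories VALUES (?, ?, ?)",
            (user_id, key, json.dumps(value)),
        )
        self._conn.commit()

    def read(self, user_id: str) -> dict:
        # Every read is filtered by user_id, so users never see each other's rows.
        rows = self._conn.execute(
            "SELECT key, value FROM memories WHERE user_id = ?", (user_id,)
        )
        return {key: json.loads(value) for key, value in rows}


def remember_preference(db: MemoryDb, user_id: str, preference: str) -> dict:
    """Agent-side code depends only on the interface, not on the backend."""
    db.upsert(user_id, "preferences", {"text": preference})
    return db.read(user_id)
```

Swapping `DictDb()` for `SqliteDb("agent.db")` changes where the memory lives without touching `remember_preference`, which is also why performance and scale end up tied to whichever backend the user configures.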

Agno Memory is one of the features that the Agno framework (now evolved to AgentOS) offers. It’s a simple memory interface that captures user profile, entity, and session information. Dash takes an agent-centric approach and introduces context. It’s mainly focused on internal usage and development with a focus on text and SQL information. Performance and scale are tied to the backends configured by the users.

CrewAI

crewai.com · GitHub · Open source + enterprise platform

Multi-agent orchestration framework. CrewAI implements a sophisticated memory-management system that provides AI agents with access to shared short-term, long-term, entity and contextual memory.

Characteristics We Know

- Multimodality: Memory is text‑based and LLM‑driven: it stores and reasons over linguistic facts, task outputs, and user‑scoped records; images, audio, and video are handled via tools or RAG pipelines outside the Memory class rather than natively inside the memory layer.

- Cognitive architecture: Memory is designed as an LLM‑driven pipeline: memory.remember(...) analyzes, encodes, and resolves contradictions in a fact; consolidate compresses and organizes overlapping or conflicting records; recall retrieves context with composite‑score ranking; and extract / forget summarize and prune lower‑value entries.

- Knowledge graph & search: There is no explicit knowledge‑graph layer with edges, ontologies, or graph‑traversal APIs; instead, memory is organized as a hierarchical tree of scopes (e.g., /project/x, /agent/researcher/notes), and you can inspect that tree via tree(), list_scopes(), and info(). Search is semantic‑plus‑scope‑aware recall over this hierarchy, more like a structured, scoped document store than a navigable graph.

- Storage backend: Memory runs on configurable backends (e.g., SQLite3, Chroma‑style RAG‑integrations), but the framework exposes a unified Memory class; you configure scoring, decay, and scope behavior while the framework wires the underlying DB, striking a balance between raw‑DB control and framework‑managed storage.

- Sharing model: Memory is scope‑ and role‑scoped: you can attach a Memory instance to an Agent, a Crew, or a Flow, and facts live under hierarchical paths like /project/alpha or /agent/researcher/notes. Multiple agents can share a memory pool but interpret it differently via scope‑paths and recall‑settings, giving you a shared‑memory substrate with role‑tuned views rather than a flat org‑wide bus.

- Performance & scale: By auto‑consolidating, compressing, and pruning facts, the system avoids the “memory bloat” trap, so later runs are faster, cheaper, and more reliable; this is baked into the memory design, but the docs don’t publish explicit “N‑billion‑tokens”‑style SLAs.

- Memory operations: At the API level you have: memory.remember(...) – analyze, encode, and consolidate a fact, with LLM‑driven contradiction‑resolution; memory.recall(...) – retrieve records from a scope‑tree path with adaptive‑depth, composite‑score search; extract / forget – summarize and decay lower‑value entries so the system stays compact and useful. You can also inspect the structure via tree(), list_scopes(), and info(path), which reinforce the “file‑system‑style hierarchy” model rather than a graph‑style model.

- Governance: The memory system reasons about its own contents (importance, contradictions, decay) and trims low‑value entries, but it does not expose rich, compliance‑style governance (retention tiers, consent flags, data‑lifecycle hooks); you layer those on top via the underlying DB and app‑logic, so governance is secondary to the memory‑fabric itself.
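The file-system-style scope hierarchy can be illustrated with a small stand-in. This is not CrewAI's Memory class: `ScopedMemory` is a hypothetical name, recall uses plain keyword overlap instead of LLM-driven composite scoring, and only the path-prefix behavior is meant to mirror the description above.

```python
from collections import defaultdict


class ScopedMemory:
    """Hypothetical scope-tree memory: facts live under paths like /project/alpha."""

    def __init__(self) -> None:
        self._records: dict[str, list[str]] = defaultdict(list)

    def remember(self, scope: str, fact: str) -> None:
        self._records[scope.rstrip("/") or "/"].append(fact)

    def list_scopes(self) -> list[str]:
        return sorted(self._records)

    def recall(self, query: str, scope: str = "/") -> list[str]:
        """Return facts under the scope, ranked by shared words with the query."""
        prefix = scope.rstrip("/")
        terms = set(query.lower().split())
        hits = []
        for path, facts in self._records.items():
            # A path is in scope if it equals the prefix or sits below it.
            in_scope = path == prefix or path.startswith(prefix + "/")
            if not in_scope:
                continue
            for fact in facts:
                overlap = len(terms & set(fact.lower().split()))
                if overlap:
                    hits.append((overlap, fact))
        return [fact for _, fact in sorted(hits, key=lambda hit: -hit[0])]


memory = ScopedMemory()
memory.remember("/project/alpha", "database is postgres")
memory.remember("/project/beta", "database is mysql")
memory.remember("/agent/researcher/notes", "prefers postgres benchmarks from 2025")
```

Two agents sharing this pool but recalling from `/project` and `/agent/researcher` respectively would see different views of the same store, which is the role-tuned sharing behavior the sharing-model bullet describes.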

CrewAI’s Cognitive Memory is a knowledge‑rich, agentic memory layer that organizes, compacts, and evolves what agents “know” over time, using LLM‑driven operations (remember, consolidate, recall, extract, forget) instead of just logging raw chat history. It’s a high‑level memory fabric tightly coupled to Agents, Crews, and Flows, but currently unimodal: text‑based rather than multimodal. The reliance on databases does make its performance and governance dependent on chosen databases.

LangGraph

langchain.com/langgraph · GitHub · Open source (MIT)

LangGraph memory is a transparent, state‑first memory layer for agents: it gives you short‑term thread‑scoped state plus long‑term, cross‑session storage via a pluggable Store, but it expects you to wire the DB, schema, and search logic yourself.

Characteristics We Know

- Multimodality: LangGraph memory is text‑and‑state‑based: it reasons over messages, tools, and structured records, not over raw images, audio, or video, so “cognition” here is semantic and state‑based, not multimodal perception.

- Cognitive architecture: It provides primitives for a flexible cognitive architecture—short‑term working memory (State/checkpoints) plus long‑term, cross‑session Store‑based recall—but you must design the “cognitive flow” (reflection, self‑critique, planning, memory‑pruning) as part of your graph, rather than getting a pre‑built cognitive‑architecture product.

- Knowledge graph & search: Memory is attached naturally to the graph: nodes can read/write state (short‑term) and read/write into the Store (long‑term), so you build memory‑aware nodes that explicitly store facts, preferences, or summaries and inject them back into prompts.

- Storage backend: You can wire different backends (Redis, in-memory, custom DBs) into the Store, and LangGraph exposes semantic / vector-enabled search over those stored memories, often by using embeddings and a vector-enabled store such as Redis.

- Sharing model: Sharing is namespace-driven - you store data under keys like ("user_123", "memories") or ("app_x", "global_facts"), so you can build per-user, per-team, or per-app memory views, but there is no built-in “org-wide memory bus” with roles or relationships; sharing is controlled by your app logic and DB permissions.

- Performance & scale: Cloud-scale via PostgreSQL and MongoDB backends. No published memory retrieval benchmarks.

- Memory operations: LangGraph exposes explicit operations (store.put/aput, store.get/aget, and store.search/asearch) for storing and retrieving user‑level facts and summaries across threads, while the graph State acts as a short‑term working‑memory surface checkpointed per‑thread. You are expected to design trimming and compacting patterns yourself: summarizing long exchanges into compact memories, attaching metadata for time‑ and usage‑based decay, and selectively injecting only a small set of semantically relevant memories into prompts, so long‑term context is rich but not wasteful.

- Governance: Durable execution with full replay capability. Human-in-the-loop checkpointing. LangSmith tracing and observability. Time-travel debugging across graph state checkpoints.
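Namespace-driven sharing is easier to see in code. The stand-in below mimics only the shape of the put / get / search operations named above, using tuple namespaces such as ("user_123", "memories"); it is a dictionary-backed illustration with a hypothetical class name, not the LangGraph Store, and it omits embeddings and semantic search entirely.

```python
from typing import Optional


class NamespaceStore:
    """Hypothetical stand-in for a namespaced long-term store."""

    def __init__(self) -> None:
        self._data: dict[tuple[str, ...], dict[str, dict]] = {}

    def put(self, namespace: tuple[str, ...], key: str, value: dict) -> None:
        self._data.setdefault(namespace, {})[key] = value

    def get(self, namespace: tuple[str, ...], key: str) -> Optional[dict]:
        return self._data.get(namespace, {}).get(key)

    def search(self, namespace_prefix: tuple[str, ...]) -> list[dict]:
        """Return every value whose namespace starts with the given prefix."""
        depth = len(namespace_prefix)
        return [
            value
            for namespace, items in self._data.items()
            if namespace[:depth] == namespace_prefix
            for value in items.values()
        ]


store = NamespaceStore()
store.put(("user_123", "memories"), "diet", {"fact": "vegetarian"})
store.put(("user_123", "settings"), "timezone", {"fact": "UTC"})
store.put(("app_x", "global_facts"), "release", {"fact": "v2 is live"})
```

Here `store.search(("user_123",))` returns only that user's records, so isolation between users or teams depends entirely on which prefix the application passes in. That is why sharing and access control live in your app logic rather than in the store.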

LangGraph memory is a feature inside the agent framework; you assemble it using checkpoints, Store, and your own schema, rather than getting a managed, black-box-style memory layer. It lacks the multimodality that is characteristic of humans, and its scale is tied to the chosen storage backend.

Lyzr AI Cognis

lyzr.ai · Memory within Lyzr AI framework

Cognis is Lyzr’s production-grade memory layer for AI agents — giving every agent the ability to recall what matters, update knowledge on the fly, and stay consistent across every conversation, session, and deployment.

Characteristics We Know

- Multimodality: Cognis is text‑only: it ingests conversation messages, extracts facts, and returns structured text‑based memories; it does not process images, audio, or video directly.

- Cognitive architecture: It uses a three‑tier architecture (immediate, active, archival recall) to mirror short‑term, mid‑term, and long‑term memory, and it maintains consistent, evolving knowledge by automatically deduplicating and updating facts. That is closer to a “human‑like” memory pattern.

- Knowledge graph & search: Cognis employs hybrid search via Matryoshka embeddings + BM25 keyword search, fused with Reciprocal Rank Fusion and temporal‑recency boosting, including “when‑aware” queries. The result is structured, compact, and relatively low‑noise recall, with a graph‑like feel but not full‑blown relational‑reasoning over an explicit ontology.

- Storage backend: The core stack is Qdrant (vector search) + SQLite FTS5 (local‑file‑backed full‑text index) for the open‑source edition, with the hosted version layering on OpenSearch, MongoDB, Neo4j, and Qdrant.

- Sharing model: Memory is scoped by owner_id, agent_id, and session_id, so Cognis cleanly separates user‑specific, agent‑specific, and session‑specific memories. It can support multi‑user and multi‑agent flows, but the design is more per‑user/per‑agent than a single, org‑wide “collective mind” graph.

- Performance & scale: The open‑source version reports around 500 ms search latency with a very light footprint; the hosted version claims sub‑300 ms latency and strong benchmark numbers (e.g., 85.9% on LoCoMo, 92.4% on LongMemEval).

- Memory operations: Cognis supports add, get, search, update, and delete for memories, with attentive ingestion logic: before storing a new fact, it retrieves similar memories and decides whether to add, update, delete, or skip, resolving contradictions at write time. It also manages short‑term (session‑scoped) + long‑term (persistent, cross‑session) memory, and combines them into a ready‑made LLM context block.

- Governance: Memory is scoped. Hosted version is multitenant. Audit logging and monitoring support are unclear.
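The hybrid-search bullet mentions Reciprocal Rank Fusion with temporal-recency boosting. A minimal sketch of that combination follows. The RRF formula (sum of 1 / (k + rank) across rankings) is the standard one; the constant k=60 is a common default, and the half-life decay used as the recency boost is our assumption, since Cognis's exact boosting formula is not something we can confirm.

```python
def rrf_with_recency(
    rankings: list[list[str]],
    age_days: dict[str, float],
    k: int = 60,
    half_life_days: float = 30.0,
) -> list[str]:
    """Fuse several ranked lists, then down-weight older memories."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            # Standard RRF: each list contributes 1 / (k + rank).
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    boosted = {
        # Assumed recency boost: the score halves every half_life_days.
        doc_id: score * 0.5 ** (age_days.get(doc_id, 0.0) / half_life_days)
        for doc_id, score in scores.items()
    }
    return sorted(boosted, key=boosted.get, reverse=True)


vector_hits = ["a", "b", "c"]   # e.g. from embedding similarity
keyword_hits = ["b", "a", "d"]  # e.g. from BM25
fused = rrf_with_recency([vector_hits, keyword_hits], age_days={"b": 30.0})
```

Without the age penalty, "a" and "b" would tie because each is ranked first in one list and second in the other; the recency term is what breaks the tie in favor of the fresher memory.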

Cognis is a text-based, agent-specific memory framework whose overall performance and scale depend on how the backend scales. It follows the contextual-retrieval part closely but misses the human-like multimodal requirement and does not capture an existing knowledge base well.

Mastra

mastra.ai · GitHub · Open source + SaaS

Mastra is an open-source TypeScript framework for building AI-agent applications. Its memory layer aims to lower token usage and latency without losing important context: it automatically extracts and stores observations from every conversation, then injects relevant context into future requests, so your application does not need any memory-management code.

Characteristics We Know

- Multimodality: Mastra is primarily text‑centric: it’s built around LLMs, tool calls, and structured workflows; there’s no explicit multimodal ingestion pipeline into the memory layer, though you can wire vector‑store‑based RAG around documents, images, etc into the framework itself.

- Cognitive architecture: Mastra offers a structured cognitive pattern: working memory for persistent, structured user data (names, preferences, goals); semantic recall for RAG‑style retrieval of past messages; and observational memory (an “observation‑agent” layer) that summarizes and maintains a dense log instead of raw history. The Gateway is how you trigger and inspect that architecture, even though you still design the agent-logic side yourself.

- Knowledge graph & search: There is no explicit graph‑query API, but semantic‑recall and RAG‑style memory lean on vector‑based search over message‑derived embeddings (Pinecone, Chroma, PG‑vector, etc.).

- Storage backend: Storage is adapter‑based and transparent by default (e.g., PostgresStore, MemoryLibSQL, UpstashStore), and you pass the store to Mastra or individual agents.

- Sharing model: Memory is thread‑ and resource‑scoped (per‑thread or per‑user), and the platform is project‑ and org‑based, so observability and memory traces are shared across your team’s Studio views.

- Performance & scale: Mastra’s Observational Memory rewrites the “performance and scale” story for agent memory: instead of brute‑force long‑context or retrieval‑heavy RAG, it uses an observer‑reflector pattern that compresses raw history into a dense, dated observation log, achieving 5–40× conversation‑history compression while keeping a small, stable, cacheable context window. On LongMemEval, this architecture scores 84.23% with GPT‑4o and up to 94.87% with GPT‑5‑mini—state‑of‑the‑art by a wide margin—and cuts token costs by up to 10× through aggressive caching, which makes it extremely scalable for production‑grade, long‑term agents. Ultimate performance will depend on storage and retrieval backends.

- Memory operations: Mastra’s memory operations combine structured working‑memory writes, RAG‑style semantic‑recall over message history, and observational‑memory trimming into a single, agent‑wired model, with the Gateway exposing thread‑level CRUD and observation‑history views. Together they let you store, selectively retrieve, and compact long‑term context so prompts stay small while preserving recall across sessions.

- Governance: The Gateway + Studio combo gives you tracing, evals, identity‑based projects, and input‑output inspection at the platform layer. However, there is no deeply prescriptive, compliance‑first memory‑governance engine; you still layer on your own DB‑level retention, encryption, and consent‑style policies atop the Mastra‑provided adapters and Gateway APIs.
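The compression idea behind observational memory can be sketched as a buffer that collapses raw messages into one dated observation once it crosses a token budget. This is a conceptual illustration in Python rather than Mastra's TypeScript API: the class name, the whitespace token count, and the injected `summarize` callable (which stands in for an LLM observer) are all assumptions.

```python
from dataclasses import dataclass, field
from typing import Callable


def count_tokens(text: str) -> int:
    """Crude whitespace proxy for a real tokenizer."""
    return len(text.split())


@dataclass
class ObservationalMemory:
    summarize: Callable[[list[str]], str]  # stand-in for an LLM observer
    threshold: int = 50                    # raw-token budget before compressing
    observations: list[str] = field(default_factory=list)
    raw: list[str] = field(default_factory=list)

    def add_message(self, date: str, message: str) -> None:
        self.raw.append(message)
        if sum(count_tokens(m) for m in self.raw) >= self.threshold:
            # Replace the raw buffer with a single dated observation.
            self.observations.append(f"{date}: {self.summarize(self.raw)}")
            self.raw.clear()

    def context(self) -> str:
        """Dense observation log first, then any uncompressed recent messages."""
        return "\n".join(self.observations + self.raw)


memory = ObservationalMemory(
    summarize=lambda messages: f"{len(messages)} messages about billing",
    threshold=10,
)
memory.add_message("2026-04-01", "my invoice is wrong please fix it")
memory.add_message("2026-04-01", "the total should be forty dollars")
memory.add_message("2026-04-02", "thanks")
```

The prompt prefix built from `observations` changes only when a new observation is appended, which is the property that makes a small, stable context window cache-friendly.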

Mastra’s Memory Gateway is a managed, RAG‑aware memory door for agents backed by a multi‑tier memory model (working memory, semantic‑recall, observational memory) and storage‑adapter‑driven persistence. Its ability to bring human-like knowledge and multimodal capabilities together with memory is limited, but its focus is on the agentic framework, and the idea of compressing memory without disrupting the user workflow is appealing.

Model-Native Memory

(alphabetical order)

Memory tied to a specific provider’s product. It’s difficult to use OpenAI memory with Claude or vice versa without going through complicated hoops in transferring from one to another and it can be lossy. We have included these examples because they define the baseline most enterprise teams encounter first.

Claude Memory

anthropic.com · Memory tool docs · API (beta) + Claude.ai · Managed

Claude’s Memory tool is an agent‑facing, filesystem‑style memory layer exposed via the Claude API: Claude makes structured tool calls (create, read, update, delete) on a /memories directory, while your application executes the actual file operations, so storage is client‑owned, transparent, and customizable (disk, DB, cloud, encrypted). Claude also offers a separate, user‑facing chat‑memory and search layer (summarized profiles and search‑over‑chats), but that is managed and opaque; the analysis below focuses on the Memory tool, which is more relevant for agentic workflows.

Characteristics We Know

- Multimodality: Memory and chat search are text‑only. Claude summarizes and retrieves from your conversational history; there is no explicit multimodal embedding or perception layer for images, audio, or video inside this user‑facing memory feature.

- Cognitive architecture: The user‑facing features implement a two‑tiered cognitive pattern: Memory as a synthesized profile of patterns, preferences, and recurring context, updated every 24 hours; and Chat search as an on‑demand retrieval over raw chat transcripts when you phrase it as a “find what we discussed” question. This is closer to a consumer‑style continuity layer than to a multi‑storage, agentic‑cognition product. Context comes from information being associated with a user for a project. Knowledge comprises the components of the project in which Claude is being used.

- Knowledge graph & search: There is no explicit knowledge‑graph API; “memory” is a flat summarized profile, and “chat search” is a tool‑driven search over conversation logs, not a graph‑of‑entities or ontology‑aware engine. Search is context‑boundary‑aware (project vs non‑project), but still just text‑based retrieval, not graph‑style traversal.

- Storage backend: The backend is explicitly client‑owned and filesystem‑oriented: Claude only makes tool calls; your app executes file operations in the /memories directory and can back it with local disk, a database, cloud storage, or encrypted files; there is no opaque, managed‑only storage layer here.

- Sharing model: Sharing is directory‑ and project‑scoped: multiple agents or sessions can read and write to the same /memories directory, or you can wire shared storage (e.g., mounted volumes, shared object store, Git‑synced memory‑files) so agents reuse a common memory substrate. There is no built‑in cross‑user or org‑wide memory graph; sharing is achieved via shared storage paths or external tools, not a first‑class sharing model in Claude itself.

- Performance & scale: The system is window‑conscious and selective: it doesn’t just keep loading everything; instead, it synthesizes useful patterns and only searches on‑demand, which keeps token‑costs and latency reasonable for chat‑style workflows. There are no published “N‑billion‑tokens”‑style SLAs; performance is baked into the “summarize + search‑when‑asked” model rather than a separate memory‑bench layer.

- Memory operations: At the tool‑level you have explicit CRUD‑style operations over the /memories directory: create / read / update / delete files, optional writeIndex‑style index‑management if your backend implements it, agent‑driven decisions about when to create, overwrite, or prune memories. In practice, this is code‑plus‑file‑system style: you wire the tool into your agent runtime and treat /memories as your persistent, inspectable knowledge‑repo.

- Governance: The Memory tool assumes a client‑owned, filesystem‑style governance posture: Anthropic provides the protocol and the ZDR guarantee for their infra, but you are responsible for storage‑level safety, access‑control, encryption, retention, and audit‑style practices; there is no built‑in compliance‑layer inside the memory protocol itself, so governance lives entirely in your backend and app‑logic rather than in the tool. For chat search, incognito mode doesn't contribute to memory, doesn't appear in your chat history, and can't be searched later.
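Since the application, not the model, executes the file operations, the storage-level safety mentioned above starts with the handler that receives each tool call. Below is a minimal sketch of such a client-side handler. The command names ("create", "read", "delete") follow the CRUD description in this section and the payload shape is an assumption; check the official Memory tool documentation for the actual schema before relying on either.

```python
from pathlib import Path


class MemoryDirectory:
    """Hypothetical client-side executor for memory tool calls."""

    def __init__(self, root: Path) -> None:
        self.root = root.resolve()
        self.root.mkdir(parents=True, exist_ok=True)

    def _resolve(self, virtual_path: str) -> Path:
        # Map "/memories/notes.md" onto the local root and reject any
        # path that would escape it (for example via "..").
        relative = virtual_path.removeprefix("/memories").lstrip("/")
        target = (self.root / relative).resolve()
        if target != self.root and self.root not in target.parents:
            raise ValueError(f"path escapes memory directory: {virtual_path}")
        return target

    def handle(self, call: dict) -> str:
        target = self._resolve(call["path"])
        command = call["command"]
        if command == "create":
            target.parent.mkdir(parents=True, exist_ok=True)
            target.write_text(call["content"], encoding="utf-8")
            return "created"
        if command == "read":
            return target.read_text(encoding="utf-8")
        if command == "delete":
            target.unlink()
            return "deleted"
        raise ValueError(f"unsupported command: {command}")
```

Swapping the `write_text` / `read_text` calls for a database or an encrypted object store would not change the contract with the model, which is what makes the storage customizable. Access control, retention, and audit logging would be layered into the same handler.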

In short, you can use Claude's Chat Search to find specific information mentioned in a conversation weeks ago, and Claude's Memory Feature/Tool to ensure Claude knows your preferred programming language, tone, or project constraints without mentioning them in every new chat. However, it is not necessarily built to follow the KMC blueprint we established for enterprise cognition.

OpenAI Memory

openai.com · Codex feature

OpenAI’s memory story spans both ChatGPT and Codex, but for agentic and developer workflows, Codex memories are the more relevant model: they preserve useful context from prior threads into local memory files, while ChatGPT memory is primarily a user-profile personalization layer.

Characteristics We Know

- Multimodality: Codex memories are text‑based and code‑project‑oriented: they store summaries, durable entries, recent inputs, and supporting evidence from earlier threads as markdown‑like, unencrypted files, not raw images, audio, or rich embeddings.

- Cognitive architecture: Codex memories implement a two‑stage, LLM‑driven memory pipeline (extract_model → consolidation_model) that distills completed, useful threads into durable, project‑scoped entries, while skipping active or short‑lived sessions; this makes memory a lightweight, persistent‑state layer for coding‑style agents rather than a general‑purpose, flat‑log‑style store.

- Knowledge graph & search: There is no explicit knowledge‑graph API; memories are stored as plain files under ~/.codex/memories/, and you can inspect or delete them directly, but they are not wired as a navigable graph of entities and edges. You can ask Codex to “search” or recall them, but the underlying mechanism is file‑based, text‑search‑style lookup, not a graph‑traversal or ontology‑aware engine.

- Storage backend: Storage is local, filesystem‑based and developer‑inspectable: Codex writes memory files into your Codex‑home directory (typically ~/.codex/memories/), so you can review, archive, or share them, but they remain under your own control rather than a managed, black‑box‑style backend. You can also layer external memory layers (e.g., Hindsight, Mem0) on top, but those are not part of Codex’s built-in memory system.

- Sharing model: Sharing is local‑filesystem and project‑scoped. A Codex‑based workflow usually shares memory via a shared Codex‑home directory (for teams sharing the same dev environment) or via external MCP‑style memory layers that plug into multiple agents.

- Performance & scale: The system is not benchmarked in “N‑billion‑tokens” terms; instead, performance is baked into the “compact‑and‑reuse” design of local, generated‑file‑style memories, which keeps token‑costs and latency down for coding‑style workflows.

- Memory operations: Codex manages when and which threads become memories: it skips active or short‑lived sessions, redacts secrets, and runs updates in the background to avoid touching work in progress, so the memory layer scales with the project without blowing up the LLM context window.

- Governance: The docs explicitly warn developers: don’t store secrets, treat memory files as generated state, inspect them before sharing, and keep hard‑policy rules in checked‑in docs like AGENTS.md instead of relying on memory‑only. There is no rich, compliance‑style governance engine (retention tiers, audit trails, consent‑tags) built in; you layer those on top via your own file‑management and external memory tools, or rely on local‑only, non‑compliant‑system‑style governance.
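Because the memory files are plain, unencrypted text, the "inspect them before sharing" advice is easy to partially automate. The sketch below scans a memory directory for lines that look like credentials. The regular expressions are illustrative and far from exhaustive, the function name is hypothetical, and this is our own helper, not a Codex feature.

```python
import re
from pathlib import Path

# Illustrative patterns only; a real scanner would use a maintained rule set.
SECRET_PATTERNS = [
    re.compile(r"(?i)\b(api[_-]?key|token|password|secret)\b\s*[:=]\s*\S+"),
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
]


def find_suspect_lines(memory_dir: Path) -> list[tuple[str, int]]:
    """Return (file name, line number) pairs that look like stored secrets."""
    findings = []
    for path in sorted(memory_dir.rglob("*")):
        if not path.is_file():
            continue
        lines = path.read_text(encoding="utf-8", errors="replace").splitlines()
        for number, line in enumerate(lines, start=1):
            if any(pattern.search(line) for pattern in SECRET_PATTERNS):
                findings.append((path.name, number))
    return findings
```

A team could run `find_suspect_lines(Path.home() / ".codex" / "memories")` before archiving or sharing the directory, assuming the default location described above. A clean result does not prove the files are safe, so manual review still applies.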

Codex memories are a lightweight, filesystem‑oriented memory layer for developer‑agents, where prior‑thread context is distilled into local, editable files that future sessions can reuse without re‑prompting, and where you retain control over what is stored and shared. The focus here is not on creating a cognition infrastructure that incorporates a knowledge base, org-wide multimodal memory, or rich context.

Specialized / Research

(alphabetical)

MemPalace

MemPalace is an open-source, local-first memory layer created by Milla Jovovich and Ben Sigman. It aims to give Claude-style assistants structured, persistent memory backed by Chroma and SQLite, storing full conversation history in a navigable, mind-palace-inspired tree. The project is experimental: it applies the classical "Method of Loci" (memory palace) to large language models by giving them a spatial data structure to navigate. The goal is to provide a spatial anchor for information, which is intended to improve retrieval accuracy in long-context scenarios.

What’s special to learn from MemPalace is its local-first, verbatim-storage philosophy and its extreme-compression, AAAK-style pipeline, which tries to keep every token while structuring it so it remains usable. Its benchmark-first approach went viral and then drew scrutiny, which makes it a good example of how easy it is to over-claim on LongMemEval-style scores. It is also a reminder of how powerful purely local, user-transparent memory can be for teams that care about privacy and control. Finally, it illustrates spatial-relational indexing: by treating data points as "objects" in a physical room, it suggests that giving an agent a sense of "location" within its data can reduce hallucinations and improve the precision of multi-step reasoning.
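To make the idea of "location" concrete, here is a toy sketch of a tree of named rooms that stores text verbatim and narrows retrieval to a sub-tree before matching. It is not MemPalace's schema or API: the names `Room`, `place`, and `recall`, the slash-separated paths, and the keyword match are all hypothetical choices for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Room:
    """A node in the tree: verbatim text items plus named child rooms."""
    name: str
    items: list = field(default_factory=list)
    children: dict = field(default_factory=dict)

    def descend(self, parts, create=False):
        """Follow a list of room names; optionally create missing rooms."""
        node = self
        for part in parts:
            if part not in node.children:
                if not create:
                    return None
                node.children[part] = Room(part)
            node = node.children[part]
        return node

    def walk(self, prefix=()):
        """Yield (location, text) for this room and everything beneath it."""
        here = prefix + (self.name,)
        for text in self.items:
            yield "/".join(here), text
        for child in self.children.values():
            yield from child.walk(here)


def _split(path):
    return [p for p in path.split("/") if p]


def place(root, path, text):
    """Store text verbatim at a location such as 'projects/billing'."""
    root.descend(_split(path), create=True).items.append(text)


def recall(root, path, keyword):
    """Search only the sub-tree at `path` (empty path = whole tree)."""
    parts = _split(path)
    start = root.descend(parts)
    if start is None:
        return []
    prefix = (root.name, *parts[:-1]) if parts else ()
    needle = keyword.lower()
    return [(loc, text) for loc, text in start.walk(prefix) if needle in text.lower()]


palace = Room("palace")
place(palace, "projects/billing", "Billing retries failed charges three times.")
place(palace, "people/ana", "Ana owns the billing service.")
scoped = recall(palace, "projects", "billing")   # only the projects wing
everything = recall(palace, "", "billing")       # whole palace
```

Scoping the search to `projects` returns only the item stored under that branch, while the unscoped search also returns the note under `people/ana`. That narrowing of the candidate set is the effect the spatial metaphor is after.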

MemoryOS

MemoryOS is a research-centric framework designed as an "operating system" for agent memory. Its primary goal is to move AI beyond a flat knowledge base by introducing a tiered storage architecture that mimics how humans prioritize recent events versus long-term facts. It provides four modules (Storage, Updating, Retrieval, and Generation) that manage short-term, mid-term, and long-term memories so agents don’t re-ask things they already know. It is positioned as a general-purpose memory layer, and its MCP server implementation lets it connect to MCP-compatible assistants.

What’s special to learn from MemoryOS is its explicitly tiered, OS-style architecture (short-term, mid-term, and long-term personal memory, abbreviated STM/MTM/LPM) and its design as a universal, pluggable memory layer rather than a one-off RAG plugin. It shows how you can factor memory into clean components (storage, update, retrieval, generation) and wire them into diverse agents while still exposing a single, shared memory surface.
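The tiering can be sketched in a few lines. This is a toy illustration under our own assumptions, not MemoryOS's API or its actual update policy: the class `TieredMemory`, the FIFO spill from STM to MTM, and the hit-count threshold for promotion to LPM are simplified stand-ins chosen to show how storage, updating, retrieval, and generation can sit behind one surface.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Entry:
    text: str
    hits: int = 0  # how many retrievals have matched this entry


class TieredMemory:
    def __init__(self, stm_capacity=3, promote_after=2):
        self.stm = deque()   # short-term: most recent items
        self.mtm = []        # mid-term: items that aged out of STM
        self.lpm = []        # long-term: items retrieved often enough
        self.stm_capacity = stm_capacity
        self.promote_after = promote_after

    def store(self, text):
        """Storage + updating: append to STM, spill the oldest item to MTM."""
        self.stm.append(text)
        while len(self.stm) > self.stm_capacity:
            self.mtm.append(Entry(self.stm.popleft()))

    def retrieve(self, keyword):
        """Search all tiers; MTM entries matched often enough move to LPM."""
        needle = keyword.lower()
        results = [t for t in self.stm if needle in t.lower()]
        # Capture LPM matches first so a just-promoted entry is not listed twice.
        lpm_matches = [t for t in self.lpm if needle in t.lower()]
        remaining = []
        for entry in self.mtm:
            if needle in entry.text.lower():
                entry.hits += 1
                results.append(entry.text)
            if entry.hits >= self.promote_after:
                self.lpm.append(entry.text)
            else:
                remaining.append(entry)
        self.mtm = remaining
        return results + lpm_matches

    def build_context(self, keyword):
        """Generation step: format retrieved memories as a prompt prefix."""
        lines = [f"- {t}" for t in self.retrieve(keyword)]
        return "Relevant memory:\n" + "\n".join(lines) if lines else ""


mem = TieredMemory(stm_capacity=2, promote_after=2)
for note in ["User prefers Python.", "Deploy target is AWS.",
             "Standup is at 9am.", "Lunch was pasta."]:
    mem.store(note)
first = mem.retrieve("python")    # found in MTM, one hit so far
second = mem.retrieve("python")   # second hit promotes it to LPM
```

After the second retrieval the Python preference lives in `mem.lpm` and no longer occupies the mid-term tier, which is the kind of movement between tiers that a hierarchical design makes explicit.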

What Comes Next?

This landscape reveals a clear divide: while almost every framework can store data, very few have mastered the Connective Tissue—the seamless synthesis of Knowledge, Memory, and Context required for human-level reasoning.

However, storage and retrieval are just the foundation. The real frontier we are moving toward is Correctness: ensuring the agent provides the right answer at the right time, within the right constraints. True Cognition isn't just about fetching a vector; it’s about the agent having the "judgment" to navigate a complex decision trace, reconcile conflicting facts, and understand the nuance of the current mission.

We are moving away from "Digital Attics" toward Dynamic Reasoning Engines. Some of the frameworks we’ve analyzed are already laying the bricks for this future, but the integration remains the final hurdle.

In the final part of this series (Part 2c: The Architect’s Playbook), we shift from the "What" to the "How." We will share our key observations from this study, provide a Decision Matrix for selecting your memory stack, and list the critical questions every architect should ask before committing to a framework.

Ali Nahm is a Partner at Tribe Capital. Aperture Nexus is built by ApertureData. Both authors have positions in this landscape. We’ve disclosed them in the framework scoring and welcome challenges to our assessments.

This blog was improved with feedback from Sonam Gupta, Sr. Dev Advocate at Telnyx.

Sources

Searches supported by Gemini, Perplexity, Claude, and good old-fashioned reading of the following (non-exhaustive) list of documents:

- Mem0 ECAI 2025 paper (arXiv:2504.19413)

- Zep paper (arXiv:2501.13956)

- Graphiti GitHub

- Cognee GitHub

- Hindsight benchmarks

- MemMachine launch

- Prem Cortex GitHub

- Rippletide

- Sentra funding (SiliconANGLE)

- Lyzr Amazon AgentCore Memory

- Agno GitHub

- Agno Dash GitHub

- LangGraph 1.0

- Mastra GitHub

- CrewAI GitHub

- OpenAI memory controls

- Claude memory tool docs

- MemoryOS paper (arXiv:2506.06326)

- MemPalace GitHub

- Zep vs. Supermemory
