Part 2c: Lessons from 20 Frameworks and How to Choose

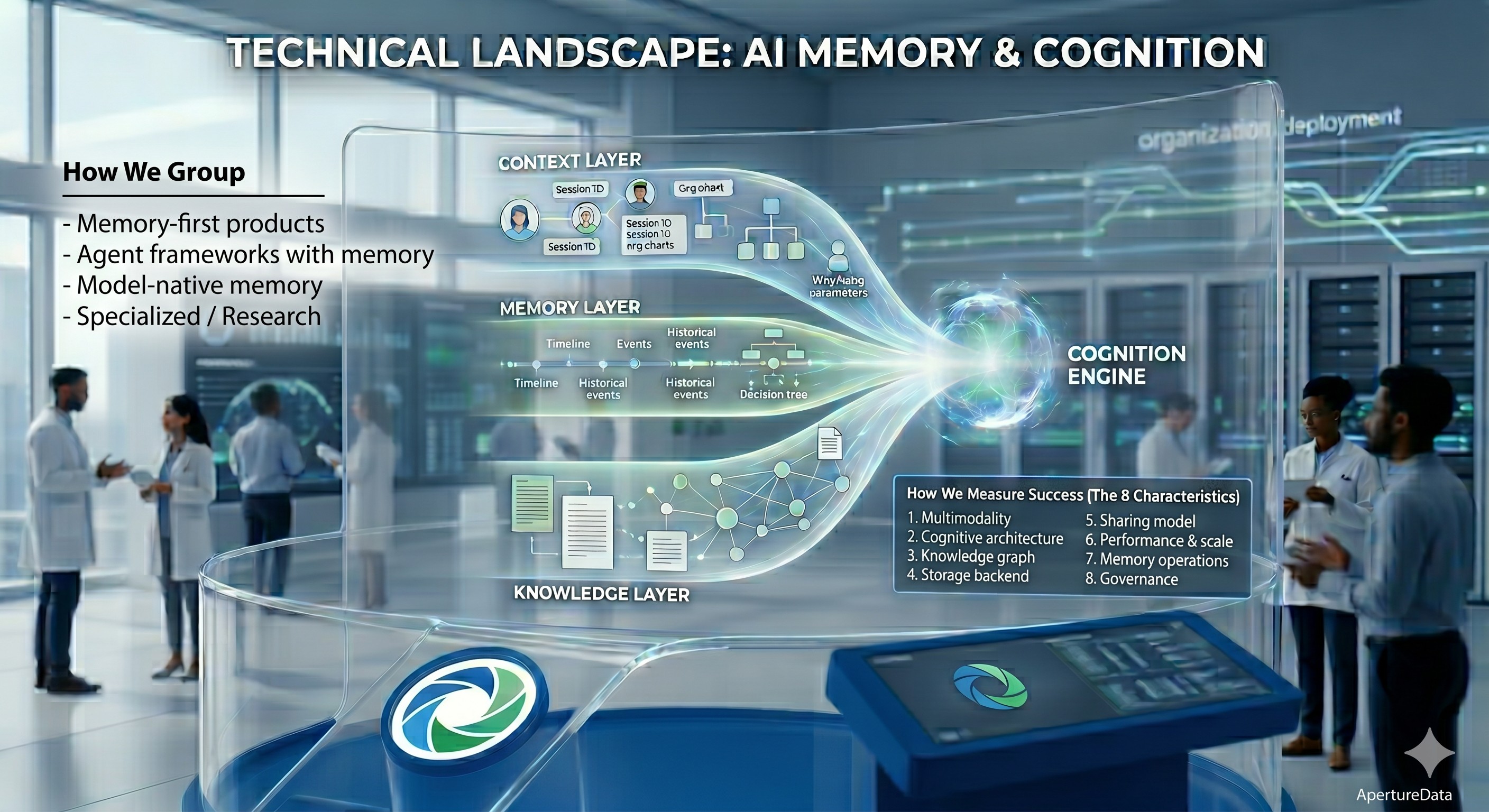

Machine Cognition isn’t a new concept; it’s as old as AI itself. But as we move from research papers to production, we are finally defining it in real, executable terms. After auditing 20+ frameworks across four categories (memory-first products, agent frameworks, model-native memory, and research), we found several things that benchmark tables do not convey.

Context: This Series So Far

This is Part 2c of our four-part Deep Dive on AI Memory and Cognition:

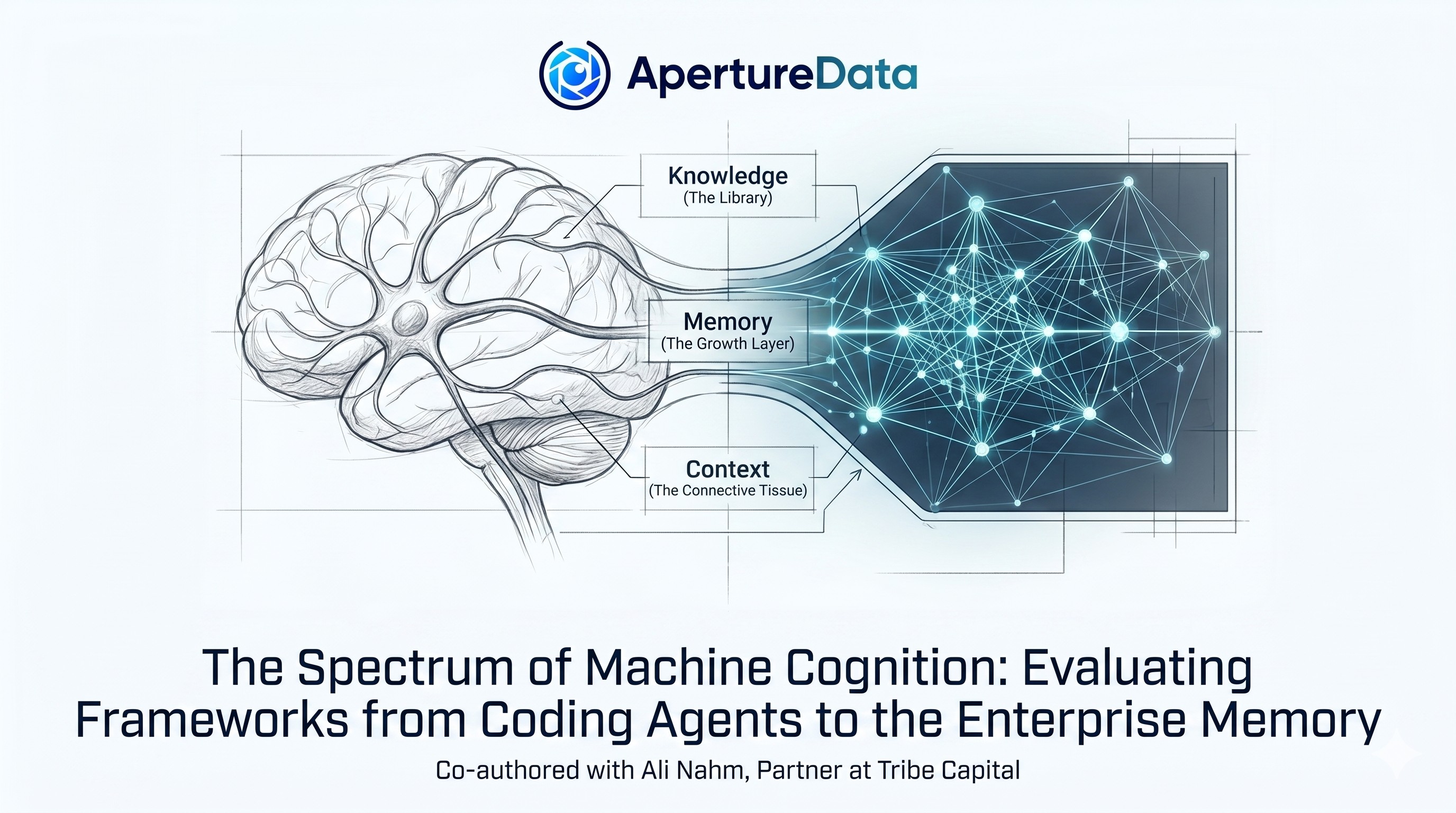

- Part 1: Human Memory, the Perfect Template for AI Memory introduced the organizational cognition model, based on what we have learned and observed about human cognition in organizational settings.

- Part 2a: The Spectrum of Machine Cognition established the KMC (Knowledge-Memory-Context) blueprint, mapping human understanding to the operating model required to support AI cognition. It also laid out the eight evaluation criteria a solution must meet to work in an organizational setting.

- Part 2b: AI Memory & Cognition Landscape: Deep Dive provided a detailed framework-by-framework analysis of 20+ memory systems and how they measure against the KMC standard.

This post synthesizes the findings into a playbook for architects choosing the cognitive infrastructure for their organization.

The Cognition Gap: Why Models Alone Fall Short

Everyone knows this by now: frontier LLMs like Claude, GPT-5, and Gemini are very powerful but incomplete. Even with some of them supporting 10M-token windows, they hit a functional ceiling: they cannot maintain a persistent, evolving state of your organization’s reality.

As we established in Part 1, human intelligence doesn’t come from a single processing engine. It emerges from a blend of three things: Knowledge (what we know exists), Memory (what we’ve learned and refined), and Context (who we are, where we are, why we’re acting, and what past outcomes have been). A person walks into a meeting because context told them it mattered. They retrieve the right fact because memory prioritizes it. They make a decision because knowledge and memory ground them.

Models alone fail because of:

- Context Rot: Stuffing a massive knowledge base into a prompt doesn't scale. It leads to attentional dilution, where the model's reasoning drifts toward "noise" or generic training data instead of your grounding facts. Static context is also often dead context—it cannot self-heal or prune stale data as your business moves.

- Stateless Experience: Every conversation starts at zero. There is no cumulative learning, no refined tribal knowledge, and no organizational memory that survives the end of a session.

- Context Void: Models don’t know your org’s constraints, your team’s mission, or why a decision matters to your business.

- Context Window Churn: Even without "long-context surcharges," the math is brutal. In 2026, re-uploading a 1M-token codebase into every prompt for a multi-agent team creates a cost curve that scales with every "thought," not with your system’s actual intelligence.

- Multimodal Amnesia: While models can now "see" natively, they cannot remember multimodally. They process the pixels in the moment, but the visual artifact, e.g. the "blue wire" in your schematic, is discarded the second the session clears.
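To make the Context Window Churn point concrete, here is a minimal arithmetic sketch. Only the 1M-token codebase comes from the text above; the price, turn count, agent count, and retrieved-slice size are hypothetical placeholders chosen for easy arithmetic, not vendor figures.

```python
def input_cost_usd(tokens_per_turn: int, turns: int, agents: int,
                   usd_per_million_tokens: float) -> float:
    """Input-token cost when `tokens_per_turn` is sent on every turn by every agent."""
    total_tokens = tokens_per_turn * turns * agents
    return total_tokens / 1_000_000 * usd_per_million_tokens


# Hypothetical workload: 5 agents, 50 reasoning turns each,
# at an assumed (not real) price of $1.00 per million input tokens.
AGENTS, TURNS, PRICE = 5, 50, 1.00

# Re-sending the whole 1M-token codebase on every turn.
full_context = input_cost_usd(1_000_000, TURNS, AGENTS, PRICE)

# Sending only a retrieved 4,000-token slice on every turn (assumed size).
retrieved_slice = input_cost_usd(4_000, TURNS, AGENTS, PRICE)

print(f"full context:    ${full_context:,.2f}")
print(f"retrieved slice: ${retrieved_slice:,.2f}")
```

Under these assumed numbers the full-context run costs 250 times more than the retrieved-slice run, and the gap grows linearly with every extra turn or agent.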

This is the Cognition Gap. Models are the brain; agents are the action layer; but an organization needs a nervous system.

This is where the 20 frameworks we audited step in. And this is where they diverge sharply.

The Cognition Sprawl Reality: Key Observations From The Landscape Study

Our analysis is based on an extensive review of the technical documentation, API specifications, and whitepapers published by these vendors as of April 2026. While we have not performed live stress-tests on every proprietary system, the architectural patterns, and the gaps they leave, are clearly visible in their design choices.

Most Frameworks Handle Basic Context

Every framework we audited captures some level of context: who did what, in which session. What’s missing is the full decision trace—the ability to say not just “User X did Y in session Z,” but “User X did Y in session Z, within department D, for purpose P, under organizational constraints C, with (preferred) outcome O.”

Session-level context is table stakes. Organizational hierarchy, department scope, and restriction attributes that travel with every memory entry are not. This matters for enterprises that need to ask: “What did the support team know about this customer in Q3?” or “Why did agent A retrieve this fact in this context?”
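One way to picture a full decision trace is a memory entry whose context travels with it. The sketch below is illustrative only: the class names, field names, and sample data are hypothetical and do not describe any audited framework’s schema.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class DecisionContext:
    user: str                                 # "User X"
    session: str                              # "session Z"
    department: str                           # "department D"
    purpose: str                              # "purpose P"
    constraints: Tuple[str, ...] = ()         # "organizational constraints C"
    preferred_outcome: Optional[str] = None   # "(preferred) outcome O"


@dataclass(frozen=True)
class MemoryEntry:
    content: str
    recorded_on: date
    context: DecisionContext  # stored with the entry, not rebuilt in app code


def known_by_department(entries: List[MemoryEntry], department: str,
                        start: date, end: date) -> List[MemoryEntry]:
    """Entries a department recorded in the half-open window [start, end)."""
    return [e for e in entries
            if e.context.department == department and start <= e.recorded_on < end]


# Hypothetical sample data.
entries = [
    MemoryEntry("Customer asked for a refund on order 1001", date(2025, 8, 14),
                DecisionContext("alice", "s-17", "support", "refund request",
                                ("refunds over $500 need approval",), "retain customer")),
    MemoryEntry("Customer upgraded to the annual plan", date(2025, 11, 2),
                DecisionContext("bob", "s-42", "sales", "renewal")),
]

# "What did the support team know about this customer in Q3?"
q3 = known_by_department(entries, "support", date(2025, 7, 1), date(2025, 10, 1))
print([e.content for e in q3])
```

Because department, purpose, and constraints are part of the entry itself, the Q3 question becomes a filter rather than a reconstruction job in the application layer.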

The implication: If you’re deploying one agent for one user, this gap doesn’t matter. If you’re deploying across teams or even customers, most frameworks will push complexity into the application layer for now.

Memory Operations: Consolidation Exists, but Inconsistently

As humans, we forget things that no longer matter, pull things from long-term storage, and update what we know as we learn. Several frameworks explicitly handle consolidation: Mem0 uses LLM extract+decide at write time. Lyzr resolves contradictions at ingestion. Zep marks facts superseded rather than deleting them. Mastra observes and compresses. CrewAI detects contradictions explicitly, and so on.

What’s inconsistent: some frameworks make consolidation a first-class operation. Others leave it to the application layer or require manual tuning. The best practice of maintaining coherence automatically, at scale, is not yet standardized.
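The "supersede rather than delete" idea can be sketched in a few lines. This is a toy in-memory illustration of the pattern, not the API or internals of Zep or any other framework named above; `Fact`, `FactStore`, and the integer timestamps are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Fact:
    subject: str
    attribute: str
    value: str
    valid_from: int                       # logical timestamp, e.g. an event counter
    superseded_at: Optional[int] = None   # None means "still current"


class FactStore:
    def __init__(self) -> None:
        self._facts: List[Fact] = []

    def assert_fact(self, subject: str, attribute: str, value: str, at: int) -> Fact:
        """Record a fact; close out any current fact it contradicts."""
        current = self.current(subject, attribute)
        if current is not None:
            if current.value == value:
                return current            # nothing new learned
            current.superseded_at = at    # keep the old fact, mark it stale
        fact = Fact(subject, attribute, value, valid_from=at)
        self._facts.append(fact)
        return fact

    def current(self, subject: str, attribute: str) -> Optional[Fact]:
        for f in self._facts:
            if (f.subject, f.attribute) == (subject, attribute) and f.superseded_at is None:
                return f
        return None

    def history(self, subject: str, attribute: str) -> List[Fact]:
        return [f for f in self._facts if (f.subject, f.attribute) == (subject, attribute)]


store = FactStore()
store.assert_fact("roadmap", "launch_quarter", "Q2", at=1)
store.assert_fact("roadmap", "launch_quarter", "Q3", at=2)

print(store.current("roadmap", "launch_quarter").value)
print([(f.value, f.superseded_at) for f in store.history("roadmap", "launch_quarter")])
```

The store answers "what is true now" with one fact while keeping the older value for audit. A real system would also have to decide when two differently worded statements contradict each other, which this sketch sidesteps by comparing exact values.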

The implication: If consolidation matters for your use case, you need to explicitly evaluate how each framework handles it. Don’t assume “it’s there”; understand what your application layer or agents still need to do.

Multimodal Means Many Things, and Most Aren’t Enough

Most frameworks claim multimodal support but hit the “Text-Only Ceiling.” Almost all of them mean: “We accept images, convert them to text descriptions, and store the text.” A cognitive layer that can’t actually see or hear operates partially blind, or worse, with the wrong assumptions (e.g., it’s easy to miss sarcasm in a transcript and misunderstand the caller). For industries where the asset is the evidence (defect photos, surveillance video, scan images), converting to text loses the artifact. For everything else, the performance and fidelity trade-offs matter.

True first-class multimodal storage, where multiple modalities beyond text are stored with native embeddings and retrievable directly, is rare. Memories.ai does this for video. Aperture Nexus does this for documents, audio, images, video, and text in a single connected graph.

The implication: If text-only is a compromise for you, evaluate on actual multimodal capability, not feature claims. It is possible to do it right, and efficiently.

Governance and Accountability: The "Opt-in" Liability

In the current market, Governance is often treated as a "day-two" elective rather than a structural requirement. Instead of building native lineage tracking, audit logging and immutable tracebacks, many frameworks outsource these responsibilities to the underlying storage. Only a few treat accountability as a first-class citizen. RippleTide makes it core. Zep/Graphiti preserves full temporal history. Aperture Nexus makes it structural (relationships across context, session, principal metadata). Most other frameworks offer partial solutions to tracking (git history in Letta, observational snapshots in Mastra) that work if you configure them correctly. But they’re not required.
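As an illustration of what a native, tamper-evident audit trail can look like, here is a minimal hash-chained log using only the Python standard library. It is a sketch of the general technique under our own assumptions, not the mechanism of any framework named above; `AuditLog` and the event fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(prev_hash: str, event: dict) -> str:
    """SHA-256 over the previous hash plus a canonical JSON form of the event."""
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditLog:
    def __init__(self) -> None:
        self._records = []

    def append(self, event: dict) -> str:
        """Add an event, chaining it to the hash of the record before it."""
        prev = self._records[-1]["hash"] if self._records else GENESIS
        record_hash = _digest(prev, event)
        self._records.append({"event": dict(event), "prev": prev, "hash": record_hash})
        return record_hash

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered record breaks the chain."""
        prev = GENESIS
        for record in self._records:
            if record["prev"] != prev or record["hash"] != _digest(prev, record["event"]):
                return False
            prev = record["hash"]
        return True


log = AuditLog()
log.append({"actor": "agent-a", "action": "read", "memory_id": "m-1"})
log.append({"actor": "alice", "action": "update", "memory_id": "m-1"})
print(log.verify())
```

A chain like this makes after-the-fact edits detectable, but it does not prevent them. Real immutability still needs write-once storage or an external anchor for the latest hash.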

The implication: Most frameworks treat Governance as "digital debt" to be handled at the application layer. If you are in a regulated industry, you cannot assume the framework provides a native "right to audit."

Enterprise Sharing Models Are Nascent

Most frameworks evolved from personal assistants, since that was the first use case, so enterprise sharing models are still nascent. User-level scoping is universal. Session-level context is common. But true multi-tenant enterprise sharing, where memory is scoped to personal, team, project, agent + human, and organizational levels with structural permissions, is rare. A few frameworks (Aperture Nexus, Sentra) design for this from the ground up.
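The scoping levels described above can be sketched as a simple visibility check. The names here (`Scope`, `Principal`, `ScopedMemory`, `can_read`) are hypothetical, the agent + human level is left out for brevity, and a production system would enforce this in the storage layer rather than in a Python function.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet


class Scope(Enum):
    PERSONAL = "personal"
    TEAM = "team"
    PROJECT = "project"
    ORGANIZATION = "organization"


@dataclass(frozen=True)
class Principal:
    id: str
    org: str
    teams: FrozenSet[str] = frozenset()
    projects: FrozenSet[str] = frozenset()


@dataclass(frozen=True)
class ScopedMemory:
    content: str
    scope: Scope
    owner: str  # user id, team, project, or org name, depending on scope


def can_read(principal: Principal, memory: ScopedMemory) -> bool:
    """A principal sees a memory only if they belong to the scope that owns it."""
    if memory.scope is Scope.PERSONAL:
        return memory.owner == principal.id
    if memory.scope is Scope.TEAM:
        return memory.owner in principal.teams
    if memory.scope is Scope.PROJECT:
        return memory.owner in principal.projects
    return memory.owner == principal.org


# Hypothetical sample data.
alice = Principal("alice", org="acme", teams=frozenset({"support"}),
                  projects=frozenset({"apollo"}))
memories = [
    ScopedMemory("prefers terse replies", Scope.PERSONAL, "alice"),
    ScopedMemory("escalation playbook v3", Scope.TEAM, "support"),
    ScopedMemory("zeus launch checklist", Scope.PROJECT, "zeus"),
    ScopedMemory("company holiday calendar", Scope.ORGANIZATION, "acme"),
]

visible = [m.content for m in memories if can_read(alice, m)]
print(visible)
```

Alice sees her own note, her team’s playbook, and the organization-wide calendar, but not the memory scoped to a project she is not on.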

The implication: If you’re building enterprise cognition with cross-team sharing, most frameworks could require significant custom work for now unless sharing is one of their first design principles. If you’re staying within team or project scope, most will work fine.

Performance & Scale: The Latency Tax of Fragmented State

Frameworks that work fine at 1,000 memories often collapse at 1,000,000. And in production, a “perfect” answer that takes 10 seconds to generate is useless. Real cognition is at least soft real-time, and data points can scale to billions, if not trillions, in some cases.

Many frameworks sit on pluggable backends (one system for episodic memory, another for graphs, a third for session state). Each handoff is a failure point. Each duplicate data source is a consistency problem. The engineering debt compounds before you even know if your knowledge is coherent.

The implication: If your roadmap involves cross-team collaboration or high-velocity decision-making, "pluggable" backends are a performance trap. Prioritize unified engines that eliminate the handoff between memory types.

How to Choose: Three Trajectories

“Which framework is best” is the wrong question. Different frameworks solve different problems. Here are the three clearest trajectories:

Trajectory 1: Personal Developer (Solo Agent)

You’re building an AI assistant or agent for individual use. The agent should remember your preferences, your code style, your project context. Nothing needs to be shared.

What matters: Session persistence, low latency, minimal setup.

What doesn’t matter: Org hierarchy, cross-team sharing, multimodal, governance at scale.

Sweet spot: Claude memory, OpenAI memory, Letta, Agno, Mem0, Mastra, Hindsight, and LangMem are great examples.

Reality check: These tools are mature, simple, and work. If you’re staying here, you’re done evaluating. If you want to incorporate your personal knowledge base, you may have to integrate it into these frameworks yourself or look into next-level options like Sentra or Nexus.

Trajectory 2: Team Cognition (Coding Teams)

You’re building agents that help teams of engineers, sharing code context, learning from codebase changes, maintaining project-specific knowledge. The agents ultimately serve the team, not just an individual.

What matters: Session state management, multi-agent coordination, contradiction handling (if the same codebase fact changes, the agent needs to know), and asynchronous background memory processing.

What doesn’t matter yet: True multimodality, full org hierarchy, immutable audit trails (though these will matter in a few months).

Sweet spot: LangGraph, CrewAI, Agno (AgentOS), Mastra, Cognee, MemMachine, Mem0, and Zep are great candidates.

Reality check: These are pretty mature frameworks right now. Teams are shipping with them successfully. The trade-offs are well-known.

Trajectory 3: Enterprise Cognition (Organizational Memory)

You are building the intelligence for an entire organization. Knowledge is captured across departments, shared between projects, and must remain auditable. These agents represent the company’s collective brain, moving beyond temporary coding sessions into long-term institutional memory.

What doesn’t matter: Model brand loyalty and "chat-centric" features no longer define the winner; in a high-scale environment, the specific LLM is a swappable commodity, and basic session history is a given.

Flavor A: The Internal Nervous System (Operational Intelligence)

You are optimizing for team alignment and decision history. The goal is to ensure the organization never forgets why a choice was made or what happened in a meeting months ago.

What matters: Fact evolution, institutional capture across tools like Slack and Jira, scale, high-accuracy temporal reasoning to track how internal goals change over time, and governance.

Sweet spot: Zep, Hindsight, RippleTide, Cognee, Nexus, Supermemory, Prem Cortex, Mem0, and Sentra are great choices.

Flavor B: The Customer-Facing Cognitive Layer (High-Fidelity Experience)

You are building memory for products that interact with users, possibly with internal knowledge, where unstructured data, sophisticated sharing models, and governance are primary requirements.

What matters: Native multimodal storage where documents, images, audio, and video can be primary facts; relationships; context; scale; performance; structural multi-tenancy to ensure user data isolation; and sub-second retrieval at global scale.

Sweet spot: Aperture Nexus, Memories.ai, Sentra, and Supermemory are options to evaluate.

Reality check: Organizational intelligence of either flavor requires more thinking than a two-line summary, which is what we focus on next.

Doing it Right: Cognition Infrastructure For Organizational Intelligence

The Sequencing That Matters

For an enterprise architect, "ease of use" is a trap if it comes at the cost of structural integrity. When building at organizational scale, evaluate in this order to ensure you solve for Authority (what the system knows) and Governance (who can see it) before you worry about Maintenance (how it maintains itself).

- Knowledge Integration: Can the framework natively ingest your existing knowledge base? An enterprise agent that only remembers what it "learns" in a chat session is an island. It must treat your existing PDFs, SQL databases, and Wikis as the primary source of truth, not just passive reference material.

- Governance: Does it model your organizational hierarchy natively? If you have to build custom code to handle team-level or project-level permissions, you are creating a permanent security liability. Look for frameworks where "Principal Metadata" (who, where, when) is structural, not a bolt-on.

- Storage Architecture: Purpose-built or pluggable backends? Pluggable systems offer flexibility but often lead to the "Split-Brain" problem where your vector search and your graph store disagree. For the enterprise, a purpose-built, unified backend wins on atomic consistency.

- Multimodal Fidelity: Is it truly multimodal, or just "vision-to-text"? In an organization, the "truth" is often trapped in a PDF’s flowchart, a technical diagram, or a video log. If your framework strips away the original context, your agent is blind to the core logic of your business.

- Memory Operations: How does it handle contradictory or superseded facts? Only once the data is integrated and secure should you focus on "self-healing" logic, ensuring that when a project roadmap is updated, the agent doesn't keep hallucinating the old version.

The 3-Point Diagnostic

Before committing to a framework, answer these three questions to separate a "working memory" from an "enterprise nervous system":

- The Retrieval Test: Can my agent explain why it retrieved a specific memory? Not just what was retrieved, but which specific context, session, and principal metadata informed the decision.

- The Consolidation Test: When the agent learns something new, does the system update a coherent knowledge base, or does it just accumulate versions? After six months, do you have one "truth" or three conflicting ones?

- The Lineage Test: Can I trace a decision back to the original source—the raw image, the specific video frame, or the precise document page—or just the text summary extracted from it?
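The Lineage Test can be pictured as a walk over "derived from" links until raw artifacts are reached. The sketch below is a toy illustration under our own assumptions: `SourceRef`, `MemoryNode`, `trace_sources`, and the example URIs are hypothetical and do not reflect any framework’s data model.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class SourceRef:
    uri: str       # where the raw artifact lives (hypothetical URIs below)
    kind: str      # "image", "video", "document", ...
    locator: str   # e.g. "page=14" or "frame=1200"


@dataclass(frozen=True)
class MemoryNode:
    id: str
    text: str
    derived_from: Tuple[str, ...] = ()   # ids of the nodes this one was built from
    source: Optional[SourceRef] = None   # set only on nodes extracted from a raw artifact


def trace_sources(nodes: Dict[str, MemoryNode], start_id: str) -> List[SourceRef]:
    """Walk derived_from links from a decision back to every raw artifact."""
    found: List[SourceRef] = []
    seen = set()
    stack = [start_id]
    while stack:
        node_id = stack.pop()
        if node_id in seen:          # guard against cycles and shared ancestors
            continue
        seen.add(node_id)
        node = nodes[node_id]
        if node.source is not None:
            found.append(node.source)
        stack.extend(node.derived_from)
    return found


# Hypothetical chain: decision <- summary <- (document page, video frame)
nodes = {
    "page": MemoryNode("page", "Wiring table lists the blue wire on pin 4",
                       source=SourceRef("file:///example/schematic.pdf", "document", "page=14")),
    "frame": MemoryNode("frame", "Technician connects the blue wire to pin 4",
                        source=SourceRef("file:///example/assembly.mp4", "video", "frame=1200")),
    "summary": MemoryNode("summary", "Blue wire goes to pin 4", derived_from=("page", "frame")),
    "decision": MemoryNode("decision", "Approve harness revision B", derived_from=("summary",)),
}

for ref in trace_sources(nodes, "decision"):
    print(ref.kind, ref.uri, ref.locator)
```

If the store keeps only the "summary" node and drops the `source` links, this trace returns nothing, which is exactly the failure the Lineage Test is meant to expose.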

The Most Common Enterprise Mistake

The most frequent failure we see is starting with a developer-targeted framework because it’s easy to prototype, then hitting a structural wall six months into production. The limitations that emerge (e.g., no org hierarchy, no sharing model, unbounded contradiction accumulation, and lack of multimodal support) are architectural. They cannot be patched or "wrapped" effectively.

Migrating a production memory store at scale is not a refactor; it’s a total reconstruction. The cheaper path is to spend an extra month evaluating the underlying architecture before you deploy your first agent.

Reality Check: The Unified Infrastructure

Ideally, you want a single backend to provide shared visibility so that internal decisions and customer interactions inform each other in real-time. Building an enterprise nervous system requires native relationships, scalable vector search, strong governance, and first-class multimodal support.

While you can start with specialized tools like Zep for temporal history, Cognee for knowledge graphs, or Sentra for institutional capture, managing a fragmented stack of "memory islands" creates a consistency and performance tax that few organizations can afford.

Among the frameworks we audited, Aperture Nexus is currently the only option that consolidates these infrastructure components into a single, unified layer. What remains to be seen is how it evolves its "memory operations": the high-precision correctness and consolidation logic where frameworks like RippleTide or Hindsight currently lead. Choosing this path isn’t just about picking a library; it’s about committing to a data architecture that ensures your organization’s memory is a coherent whole, not a collection of disconnected snapshots.

What We’re Building: The Case for a Purpose-Built Backend

We have published this landscape because the field needs a clearer language for what memory actually means and what enterprise requirements look like in practice. Our core observation is that the winning frameworks will be those built on the right grounding infrastructure.

Aperture Nexus is early-stage. It’s not trying to be another orchestration layer; it is the first memory framework built on a multimodal graph-vector engine (ApertureDB) to solve the Integration Tax at the data management level. We built Nexus on ApertureDB because enterprise cognition requires:

- Multimodal Fidelity: Images, video, and text unified in a single graph. When your agent looks at a PDF, it should see the diagram exactly as your engineers do.

- Performance at Scale: Sub-10ms vector search, 15ms graph lookups on billion-entity datasets, 65,000 conditional adds per second to the graph, eliminating the "integration tax" of jumping between disparate systems.

- Structural Coherence: A system designed to prevent contradictions at the storage layer, ensuring a single "truth" survives over time.

- Organizational Hierarchy: Permissions and sharing models treated as first-class entities in the database, not as flimsy application-layer metadata.

Aperture Nexus is still evolving, particularly in its native reasoning and consolidation logic. However, the underlying infrastructure is already production-proven. We believe this architecture is the only way to meet the requirements of an enterprise moving beyond simple pilots.

Want to see the deep dive into our architecture? Our next blog post will detail the design choices behind ApertureDB and why a unified graph-vector approach is the future of machine cognition.

The Closing

The era of dumping data into a vector store is ending. We’re moving toward Cognitive Enterprises: systems that connect workflows, learn from feedback, and share knowledge across teams. That requires more than a framework. It requires infrastructure built for the problem.

The landscape shows exactly where frameworks excel and where they fall short. The three trajectories show you which path you’re on. The diagnostic shows you where your gaps are.

For teams building true organizational cognition, the path is clear. The build is just beginning.

Special thanks to Ali Nahm for her invaluable guidance in refining the methodology for this study and Sonam Gupta for her review.
